`, a ``, or a `

`.

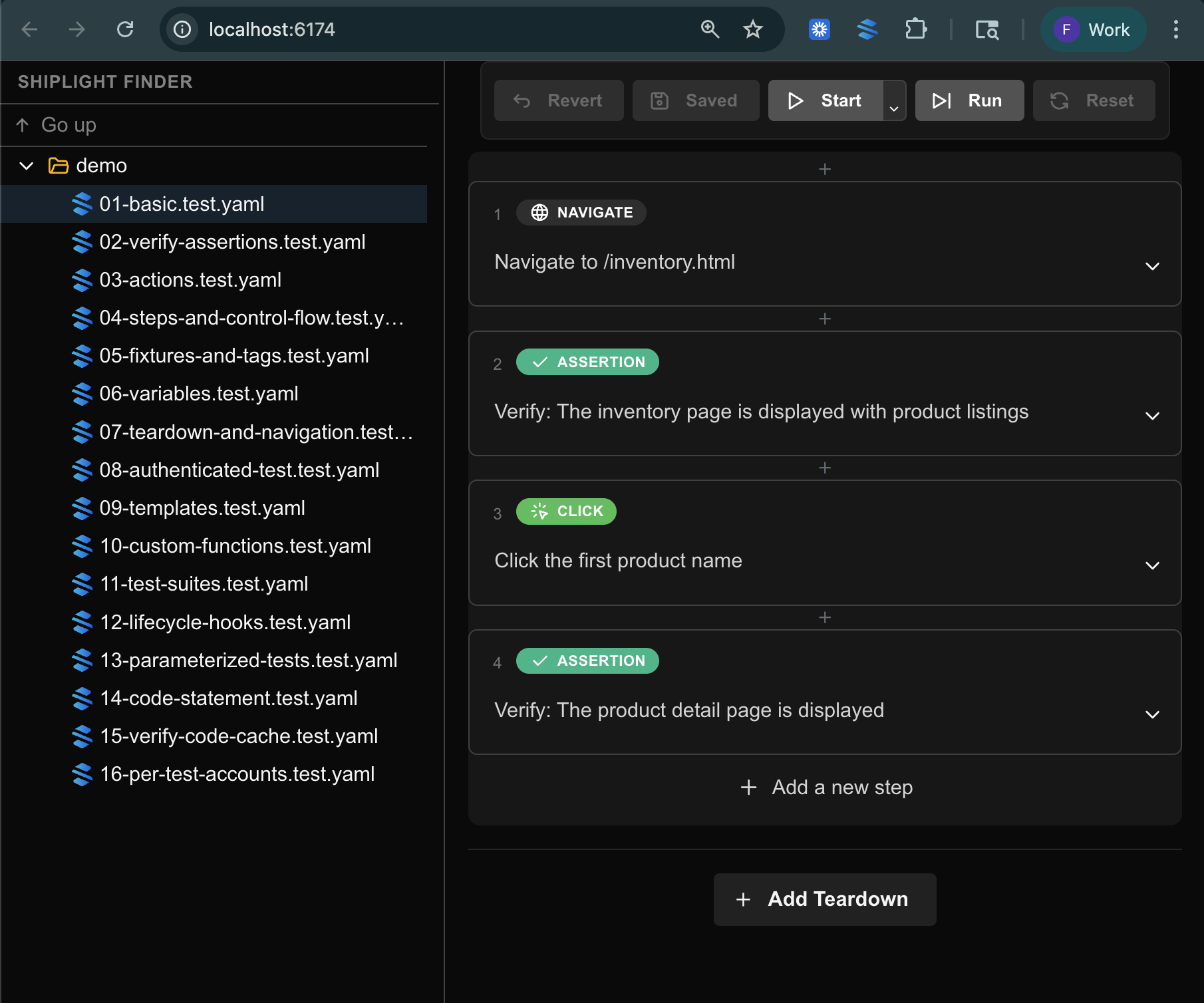

## A Complete YAML Test Example

Here is a full YAML test file for an e-commerce checkout flow, demonstrating the range of actions and assertions available.

```yaml
name: Complete checkout flow
url: https://store.example.com
tags:
  - checkout
  - critical-path
statements:
  - action: CLICK
    target: first product card
  - action: VERIFY
    assertion: product detail page is displayed
  - action: CLICK
    target: add to cart button
  - action: VERIFY
    assertion: cart badge shows "1"
  - action: CLICK
    target: cart icon
  - action: VERIFY
    assertion: cart contains 1 item
  - action: CLICK
    target: proceed to checkout
  - action: FILL
    target: shipping address
    value: 123 Test Street, San Francisco, CA 94102
  - action: FILL
    target: card number
    value: "4242424242424242"
  - action: FILL
    target: expiration date
    value: "12/28"
  - action: FILL
    target: CVV
    value: "123"
  - action: CLICK
    target: place order button
  - action: VERIFY
    assertion: order confirmation page is displayed
  - action: VERIFY
    assertion: page contains "Thank you for your order"
  - action: VERIFY
    assertion: order number is displayed
```

This test will likely survive a complete frontend redesign as long as the checkout flow itself does not change. The equivalent Playwright script would be roughly 60-80 lines of JavaScript with selectors, waits, and assertions.
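Because the test is plain data, it is also easy to check mechanically before it runs. The sketch below is a minimal lint pass over the parsed statements. The required fields per action are inferred from the example above and are an assumption, not a documented Shiplight schema; `REQUIRED_FIELDS` and `lint_statements` are hypothetical names used only for illustration.

```python
# Required fields per action, inferred from the example test above.
# This mapping is an assumption, not an official schema.
REQUIRED_FIELDS = {
    "CLICK": {"target"},
    "FILL": {"target", "value"},
    "VERIFY": {"assertion"},
}


def lint_statements(statements):
    """Return a list of human-readable problems; an empty list means OK."""
    problems = []
    for index, statement in enumerate(statements, start=1):
        action = statement.get("action")
        if action not in REQUIRED_FIELDS:
            problems.append(f"statement {index}: unknown action {action!r}")
            continue
        missing = REQUIRED_FIELDS[action] - set(statement)
        for field in sorted(missing):
            problems.append(f"statement {index}: {action} is missing '{field}'")
    return problems


# The first steps of the checkout test, written as the dicts a YAML parser
# would produce, plus one deliberately broken FILL step.
statements = [
    {"action": "CLICK", "target": "first product card"},
    {"action": "VERIFY", "assertion": "product detail page is displayed"},
    {"action": "FILL", "target": "card number"},  # "value" omitted on purpose
]

for problem in lint_statements(statements):
    print(problem)
```

A check like this could run in a pre-commit hook or a PR job, so malformed steps are caught in review rather than at execution time.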

## Getting Started with YAML Tests

If you are currently writing Playwright scripts and want to try YAML-based testing, you do not need to rewrite everything at once. Shiplight runs alongside your existing test suite through its [plugin system](/plugins).

Start with your most-maintained tests — the ones that break frequently due to UI changes. Convert those to YAML format and let them run in parallel with your existing scripts. For teams generating tests with AI coding agents, YAML is the natural output format. See how this works in the context of [PR-ready E2E tests](/blog/pr-ready-e2e-test).

References: [Playwright Documentation](https://playwright.dev), [YAML Specification](https://yaml.org)

---

### Best AI Testing Tools in 2026: 11 Platforms Compared

- URL: https://www.shiplight.ai/blog/best-ai-testing-tools-2026

- Published: 2026-03-31

- Author: Shiplight AI Team

- Categories: Guides, Engineering

- Markdown: https://www.shiplight.ai/api/blog/best-ai-testing-tools-2026/raw

An honest comparison of 11 AI testing tools — from agentic QA platforms to visual testing. Includes pricing, pros/cons, and a practical selection guide.

Full article

The AI testing tools market was valued at $686.7 million in 2025 and is projected to reach $3.8 billion by 2035. The space is crowded — and choosing the right platform matters more than ever.

We build [Shiplight AI](https://www.shiplight.ai/plugins), so we have a perspective. Rather than pretend otherwise, we'll be transparent about where each tool shines and where it falls short. This guide is designed to help you make a decision, not just read a marketing list.

Here's what we evaluated: self-healing capability, test generation approach, CI/CD integration, learning curve, pricing model, and support for AI coding agent workflows.

## The 3 Types of AI Testing Tools

Before diving into individual tools, it helps to understand the landscape. AI testing tools in 2026 fall into three categories:

### Agentic QA Platforms

These tools use AI to autonomously generate, execute, and maintain tests. They interpret intent rather than relying on brittle DOM selectors. Tests adapt when the UI changes without manual intervention.

Examples: Shiplight AI, Mabl, testRigor, QA Wolf

### AI-Augmented Automation Platforms

Traditional test automation frameworks enhanced with AI features like self-healing locators, smart element recognition, and assisted test authoring. You still write scripts, but AI reduces the maintenance burden.

Examples: Katalon, Testim (Tricentis), ACCELQ, Functionize, Virtuoso QA

### Visual & Specialized AI Testing

AI applied to specific testing domains — visual regression, accessibility, or screenshot comparison. These complement full E2E platforms rather than replacing them.

Examples: Applitools, Percy, Checksum

## Quick Comparison Table

| Tool | Category | Best For | Self-Healing | No-Code | CI/CD | AI Agent Support | Pricing |
|------|----------|---------|-------------|---------|-------|-----------------|---------|
| **Shiplight AI** | Agentic QA | AI-native teams using coding agents | Yes (intent-based) | Yes (YAML) | CLI, any CI | Yes (MCP) | Contact |
| **Mabl** | Agentic QA | Low-code E2E with auto-healing | Yes | Yes | Built-in | No | From ~$60/mo |
| **testRigor** | Agentic QA | Non-technical testers | Yes | Yes | Yes | No | From ~$300/mo |
| **Katalon** | AI-Augmented | All-in-one mixed skill teams | Partial | Partial | Yes | No | Free tier; from ~$175/mo |
| **Applitools** | Visual AI | Visual regression testing | N/A | Yes | Yes | No | Free tier; from ~$99/mo |
| **QA Wolf** | Agentic (Managed) | Fully managed QA service | Yes | N/A (managed) | Yes | No | Custom |
| **Functionize** | AI-Augmented | Enterprise NLP-based testing | Yes | Yes | Yes | No | Custom |
| **Testim** | AI-Augmented | Fast web test creation | Partial | Partial | Yes | No | Free community; enterprise varies |
| **ACCELQ** | AI-Augmented | Codeless cross-platform | Yes | Yes | Yes | No | Custom |
| **Virtuoso QA** | AI-Augmented | Enterprise Agile/DevOps | Yes | Yes | Yes | No | Custom |
| **Checksum** | AI Generation | Session-based test creation | Yes | Yes | Yes | No | Custom |

## The 11 Best AI Testing Tools in 2026

### 1. Shiplight AI

**Category:** Agentic QA Platform

**Best for:** Teams building with AI coding agents (Claude Code, Cursor, Codex) who want verification integrated into development

Shiplight connects to AI coding agents via [Shiplight Plugin](https://www.shiplight.ai/plugins) (Model Context Protocol), enabling the agent to open a real browser, verify UI changes, and generate tests during development — not after. Tests are written in [YAML with natural language intent](https://www.shiplight.ai/yaml-tests), live in your git repo, and self-heal when the UI changes.

**Key features:**

- [Shiplight Plugin](https://www.shiplight.ai/plugins) for Claude Code, Cursor, and Codex with built-in [agent skills](https://agentskills.io/) for verification, test generation, and automated reviews

- Intent-based YAML tests (human-readable, reviewable in PRs)

- Self-healing via cached locators + AI resolution

- Built on Playwright for cross-browser support

- Email and authentication flow testing

- SOC 2 Type II certified

**Pros:** Tests live in your repo and run in Shiplight Cloud — portable, no lock-in, works inside AI coding workflows, near-zero maintenance, enterprise-ready security

**Cons:** Newer platform with a smaller community than established tools, no self-serve pricing page

**Pricing:** Shiplight Plugin is free (no account needed). Platform pricing requires contacting sales.

**Why we built it:** AI coding agents generate code fast, but there was no testing tool designed to work inside that loop. We built Shiplight to close the gap between "code written" and "code verified."

### 2. Mabl

**Category:** Agentic QA Platform

**Best for:** Teams wanting low-code E2E testing with strong auto-healing and cloud-native execution

Mabl is a mature, cloud-native platform that uses AI to create, execute, and maintain end-to-end tests. It offers auto-healing, cross-browser testing, API testing, and visual regression in a single platform.

**Key features:** AI-driven test creation, auto-healing, cross-browser, API testing, visual regression, performance testing

**Pros:** Mature and well-integrated, good documentation, strong cloud-native architecture

**Cons:** Can become expensive at scale, no AI coding agent integration, tests live on Mabl's platform

**Pricing:** Starts around $60/month (starter); enterprise pricing varies

### 3. testRigor

**Category:** Agentic QA Platform

**Best for:** Non-technical testers who want to write tests in plain English without any coding

testRigor takes "no-code" to its logical conclusion — tests are written entirely in plain English from the end user's perspective. No XPath, no CSS selectors, no Selenium. The platform supports web, mobile, API, and desktop testing.

**Key features:** Plain English test authoring, generative AI test creation, cross-platform support (web, mobile, desktop)

**Pros:** Truly accessible to non-engineers, broad platform support, active development

**Cons:** Less developer-oriented than code-based tools, proprietary test format (tests aren't portable)

**Pricing:** Starts around $300/month

### 4. Katalon

**Category:** AI-Augmented Automation

**Best for:** Teams at mixed skill levels who need a comprehensive all-in-one platform

Katalon covers web, mobile, API, and desktop testing in a single platform. Named a Visionary in the Gartner Magic Quadrant, it balances accessibility for non-technical users with extensibility for developers.

**Key features:** Web/mobile/API/desktop testing, AI-assisted test authoring, Gartner-recognized, built-in reporting

**Pros:** Comprehensive platform, strong community, free tier available, Gartner recognition

**Cons:** Heavier platform with steeper learning curve, AI features feel bolted-on rather than core architecture

**Pricing:** Free basic tier; Premium from approximately $175/month

### 5. Applitools

**Category:** Visual AI Testing

**Best for:** Visual regression testing and cross-browser UI validation

Applitools specializes in visual AI — trained on millions of screenshots to detect layout shifts, visual bugs, and cross-browser inconsistencies. It integrates with Selenium, Cypress, and Playwright as an assertion layer.

**Key features:** Visual AI screenshot comparison, cross-browser layout testing, integration with major test frameworks

**Pros:** Best-in-class visual testing accuracy, broad framework integrations, strong track record

**Cons:** Focused on visual layer only — not a full E2E testing solution. You still need another tool for functional testing.

**Pricing:** Free tier available; paid plans from approximately $99/month

### 6. QA Wolf

**Category:** Agentic QA (Managed Service)

**Best for:** Teams that want to outsource QA entirely with guaranteed 80% automated coverage

QA Wolf is unique — it's a managed QA service, not just a tool. Their team of QA engineers builds, runs, and maintains Playwright-based tests for you. They guarantee 80% automated E2E coverage within 4 months. The AI Code Writer is trained on 700+ scenarios from 40 million test runs.

**Key features:** Managed QA service, AI-generated Playwright tests, dedicated QA engineers, zero flaky tests guarantee

**Pros:** Eliminates internal QA burden, fast ramp-up, tests are open-source Playwright code (you own them)

**Cons:** Higher cost than self-serve tools, less control over test authoring decisions

**Pricing:** Custom pricing (managed service model)

### 7. Functionize

**Category:** AI-Augmented Automation

**Best for:** Enterprise teams wanting NLP-based test creation with high element recognition accuracy

Functionize uses natural language processing to let non-technical users write tests in plain English, with machine learning-powered element recognition that the company claims achieves 99.97% accuracy.

**Key features:** NLP test authoring, ML element recognition, self-healing, enterprise-grade infrastructure

**Pros:** High element recognition accuracy, enterprise-ready, accessible to non-engineers

**Cons:** Enterprise pricing excludes smaller teams, less suited for fast-moving startup workflows

**Pricing:** Custom enterprise pricing

### 8. Testim (Tricentis)

**Category:** AI-Augmented Automation

**Best for:** Web application functional testing with fast test creation via record-and-playback

Testim uses AI to stabilize recorded tests — when DOM structures change, the platform identifies updated attributes and adjusts selectors to prevent flaky failures. Acquired by Tricentis, it now has enterprise backing and integration with the broader Tricentis ecosystem.

**Key features:** Record-and-playback with AI stabilization, smart locators, reusable components, Tricentis integration

**Pros:** Fast test creation, reduces flaky tests by up to 70%, enterprise backing via Tricentis

**Cons:** Record-and-playback has limitations, generated code can't be exported, some users report self-healing doesn't always work as advertised

**Pricing:** Free community edition; enterprise pricing varies

### 9. ACCELQ

**Category:** AI-Augmented Automation

**Best for:** Codeless automation across web, mobile, API, and packaged applications (Salesforce, SAP)

ACCELQ is a cloud-based codeless platform with broad coverage — web, mobile, API, database, and enterprise apps like Salesforce and SAP. Its AI features include self-healing locators and intelligent test generation.

**Key features:** Codeless automation, self-healing, unified platform for web/mobile/API/packaged apps

**Pros:** Broad platform coverage including enterprise apps, truly codeless, cloud-based

**Cons:** Less focus on modern AI coding agent workflows, enterprise-oriented pricing

**Pricing:** Custom pricing

### 10. Virtuoso QA

**Category:** AI-Augmented Automation

**Best for:** Enterprise teams scaling QA in Agile and DevOps environments

Virtuoso combines NLP test authoring with self-healing execution, visual regression, and API testing. It positions itself as the most advanced no-code platform for enterprise teams, with strong Agile/DevOps integration.

**Key features:** NLP test authoring, self-healing, visual regression, API testing, enterprise-grade infrastructure

**Pros:** Enterprise-ready, good NLP capabilities, comprehensive testing coverage

**Cons:** Enterprise pricing limits accessibility, steeper learning curve for advanced features

**Pricing:** Custom enterprise pricing

### 11. Checksum

**Category:** AI Test Generation

**Best for:** Teams wanting E2E tests generated from real production user sessions

Checksum takes a different approach — instead of writing tests or recording them, it generates tests from actual user sessions in production. AI maintains these tests as the application evolves.

**Key features:** Test generation from production sessions, AI maintenance, behavior-based coverage

**Pros:** Tests reflect real user behavior (not hypothetical flows), low effort to create initial coverage

**Cons:** Requires production traffic to generate tests (not useful for pre-launch), newer platform

**Pricing:** Custom pricing

## How to Choose the Right AI Testing Tool

### By Team Size

- **Startups and small teams:** Shiplight, testRigor — fast setup, low overhead, focused on velocity

- **Mid-market:** Mabl, Katalon, Testim — balance of features, support, and established track records

- **Enterprise:** Virtuoso, Functionize, ACCELQ, QA Wolf — managed services, enterprise security, broad platform coverage

### By Use Case

- **AI coding agent workflows (Cursor, Claude Code, Codex):** Shiplight, the only tool in this comparison with coding agent integration (via Shiplight Plugin)

- **Visual regression testing:** Applitools — best-in-class visual AI

- **Non-technical testers:** testRigor — plain English test authoring

- **All-in-one platform:** Katalon — web, mobile, API, desktop in one tool

- **Fully managed QA:** QA Wolf — outsource the entire testing process

### By Budget

- **Free tiers available:** Katalon (free basic), Applitools (free tier), Testim (community edition), Shiplight (free Shiplight Plugin)

- **Mid-range ($60–$300/month):** Mabl, testRigor

- **Enterprise/custom:** QA Wolf, Functionize, Virtuoso, ACCELQ

## What Makes AI Testing Different from Traditional Automation

Traditional test automation tools like Selenium and Cypress require developers to write and maintain test scripts manually. When the UI changes, tests break. Teams spend up to 60% of their time maintaining existing tests rather than writing new ones.

AI testing tools address this with three capabilities that traditional tools lack:

1. **Self-healing:** AI adapts to UI changes automatically. Instead of brittle CSS selectors, tools use intent-based resolution, visual recognition, or smart locator strategies to find elements even when the DOM changes.

2. **Natural language authoring:** Write tests in plain English or YAML rather than code. This makes testing accessible to PMs, designers, and QA engineers who don't write Playwright or Selenium scripts.

3. **Autonomous maintenance:** AI detects when tests need updating, fixes them proactively, and reduces the maintenance tax that makes traditional automation unsustainable at scale.

The AI testing tools market is growing at approximately 18% CAGR — a signal that these capabilities are moving from "nice to have" to table stakes.
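As a quick sanity check on that growth rate, the market figures quoted at the top of this article ($686.7 million in 2025, $3.8 billion by 2035) can be turned into a compound annual growth rate. The sketch below assumes a ten-year span between the two figures; the `cagr` helper is illustrative and not taken from any market report.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate as a fraction (0.10 means 10%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted earlier in the article, in millions of US dollars.
# The ten-year span (2025 to 2035) is an assumption about the period.
rate = cagr(start_value=686.7, end_value=3800.0, years=10)
print(f"Implied CAGR 2025-2035: {rate:.1%}")
```

The result lands between 18% and 19% per year, which is in line with the approximate figure above.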

## Frequently Asked Questions

### What is the best free AI testing tool?

Katalon offers the most comprehensive free tier (web, mobile, API testing). Applitools has a free tier for visual testing. Testim offers a free community edition. Shiplight Plugin is free with no account required — ideal for teams using AI coding agents.

### What is the best AI testing tool for startups?

Shiplight and testRigor are designed for fast-moving teams. Shiplight is best if you're building with AI coding agents (Claude Code, Cursor). testRigor is strongest for non-technical team members who want to write tests in plain English.

### Can AI testing tools replace manual QA?

Not entirely. AI testing tools can reduce manual regression testing by 80–90%, but manual exploratory testing — finding unexpected bugs by creative investigation — remains valuable. The best approach combines AI-automated regression with targeted manual exploration.

### Do AI testing tools work with Playwright, Selenium, and Cypress?

Most integrate with existing frameworks. Shiplight and QA Wolf are built on Playwright. Applitools integrates with all three. Katalon supports Selenium-based execution. The trend is toward Playwright as the foundation, with AI layered on top.

### What is self-healing test automation?

Self-healing tests automatically adapt when UI elements change — instead of failing because a button's CSS class changed from `btn-primary` to `btn-main`, the AI identifies the element by intent (e.g., "the Submit button") and continues the test. This eliminates the #1 maintenance cost in traditional automation.
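The `btn-primary` to `btn-main` example can be made concrete with a toy model. This is a simplified sketch, not how any particular vendor implements self-healing: the page is a list of plain dicts, and "intent" is reduced to matching an element's role and visible text. The function names and element fields are hypothetical.

```python
def find_by_class(elements, css_class):
    """Selector-style lookup: breaks as soon as the class is renamed."""
    return next((e for e in elements if css_class in e["classes"]), None)


def find_by_intent(elements, role, label):
    """Intent-style lookup: match on what the user sees, not on styling."""
    return next(
        (
            e
            for e in elements
            if e["role"] == role and e["text"].strip().lower() == label.lower()
        ),
        None,
    )


# The same page before and after a redesign renames the button's class.
before = [{"role": "button", "text": "Submit", "classes": ["btn", "btn-primary"]}]
after = [{"role": "button", "text": "Submit", "classes": ["btn", "btn-main"]}]

print(find_by_class(before, "btn-primary") is not None)       # class lookup works
print(find_by_class(after, "btn-primary") is not None)        # class lookup breaks
print(find_by_intent(after, "button", "submit") is not None)  # intent lookup holds
```

Real tools replace the exact text match with model-based resolution, but the principle is the same: the test keys on what the element is for, so styling changes do not break it.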

### What is agentic QA testing?

Agentic QA uses AI agents that autonomously create, execute, and maintain tests. Unlike traditional tools where humans write scripts, agentic platforms explore applications, generate test coverage, and self-heal — with minimal human intervention. Shiplight, Mabl, testRigor, and QA Wolf fall into this category.

## Final Verdict

There is no single "best" AI testing tool — it depends on your team, workflow, and priorities. Here's our honest recommendation:

- **If you build with AI coding agents** (Claude Code, Cursor, Codex) and want testing integrated into your development loop, [Shiplight AI](https://www.shiplight.ai/demo) is designed for exactly this workflow. Tests live in your repo as YAML (with optional Shiplight Cloud execution), self-heal, and are reviewable in PRs.

- **If you want a comprehensive, established platform** with broad coverage and a free tier, Katalon is the safest bet for teams at mixed skill levels.

- **If visual regression is your primary concern**, Applitools is the clear leader with best-in-class visual AI.

- **If you want fully managed QA**, QA Wolf removes the testing burden entirely with a dedicated team and coverage guarantee.

- **If non-technical testers contribute to QA**, Shiplight's YAML tests are readable by anyone on the team, while testRigor's plain English approach has the lowest barrier to entry.

The AI testing space is evolving rapidly. Whichever tool you choose, the key question isn't "does it have AI?" — every tool claims that now. The question is: **does it reduce the time your team spends on test maintenance, and does it fit into the way you already build software?**

## Get Started

- [Try Shiplight Plugin — free, no account needed](https://www.shiplight.ai/plugins)

- [Book a demo](https://www.shiplight.ai/demo)

- [YAML Test Format documentation](https://www.shiplight.ai/yaml-tests)

- [Shiplight Documentation](https://docs.shiplight.ai)

References: [Playwright Documentation](https://playwright.dev), [Gartner AI Testing Reviews](https://www.gartner.com/reviews/market/ai-augmented-software-testing-tools), [Google Testing Blog](https://testing.googleblog.com/)

---

### Shiplight vs testRigor: Intent-Based Testing Compared

- URL: https://www.shiplight.ai/blog/shiplight-vs-testrigor

- Published: 2026-03-31

- Author: Shiplight AI Team

- Categories: Guides

- Markdown: https://www.shiplight.ai/api/blog/shiplight-vs-testrigor/raw

Both Shiplight and testRigor let you write tests without code — but they take fundamentally different approaches. Here's how they compare on test format, execution, pricing, and developer workflow.

Full article

Both Shiplight and testRigor promise the same thing: write end-to-end tests without code, and let AI handle the maintenance. Both use intent-based approaches instead of brittle DOM selectors. Both claim self-healing.

But they're built for different teams and different workflows. testRigor is designed for non-technical testers who want to write in plain English. Shiplight is designed for developers and engineering teams who build with AI coding agents and want tests in their repo.

We build Shiplight, so we have a perspective. This comparison is honest about where testRigor excels and where we think Shiplight is the better fit.

## Quick Comparison

| Feature | Shiplight | testRigor |
|---------|-----------|-----------|
| **Test format** | YAML files in your git repo (also runs in Shiplight Cloud) | Plain English (only in testRigor's cloud) |
| **Target user** | Developers, QA engineers, AI-native teams | Non-technical testers, manual QA teams |
| **AI coding agent integration** | Yes, via Shiplight Plugin (Claude Code, Cursor, Codex) | No |
| **Self-healing** | Intent-based + cached locators | AI-based with plain English re-interpretation |
| **Browser support** | All Playwright browsers (Chrome, Firefox, Safari) | 2,000+ browser combinations |
| **Mobile testing** | Web-focused | iOS, Android, web |
| **Desktop testing** | No | Yes |
| **API testing** | Via inline JavaScript | Built-in |
| **Test ownership** | Your repo + optional cloud execution | testRigor's cloud only (no export) |
| **CI/CD** | CLI runs anywhere Node.js runs | Built-in CI integration |
| **Pricing** | Contact (Plugin free) | From $300/month (3 machines minimum) |
| **Enterprise security** | SOC 2 Type II, VPC, audit logs | SOC 2 Type II |
| **Test stability claim** | Near-zero maintenance | 95% less maintenance vs. traditional tools |

## How They Work — Side by Side

### testRigor: Plain English Testing

testRigor's core idea is that tests should be written from the end user's perspective in plain English. No selectors, no code, no framework knowledge.

A testRigor test looks like this:

```
login
click "New Project"
enter "My Project" into "Project Name"
click "Save"
check that page contains "Project created successfully"
check that page contains "My Project"
```

The platform interprets these instructions at runtime using AI and a proprietary language engine. It supports over 2,000 browser combinations, mobile apps (iOS and Android), desktop applications, and API testing.

**Strengths:**

- Lowest barrier to entry for non-technical users

- Broad platform coverage (web, mobile, desktop, API)

- 2,000+ browser combinations

- AI-powered test generation from recordings or descriptions

- Tests require 95% less maintenance than Selenium-based alternatives

**Trade-offs:**

- Tests exist only in testRigor's cloud — no repo copy, no export

- Plain English syntax still has conventions to learn

- Limited granular control for complex test scenarios

- Less developer-oriented than code-based or YAML-based tools

- Pricing starts at $300/month with 3-machine minimum

### Shiplight: YAML Intent Testing in Your Repo

Shiplight takes a different approach. Tests are YAML files with natural language intent statements combined with Playwright-compatible locators. They live in your git repo, are reviewable in PRs, and run anywhere Node.js runs.

A Shiplight test looks like this:

```yaml
goal: Verify user can create a new project
statements:
  - intent: Log in as a test user
  - intent: Navigate to the dashboard
  - intent: Click "New Project" in the sidebar
  - intent: Enter "My Project" in the project name field
  - intent: Click the Save button
  - VERIFY: the project appears in the project list
```

Shiplight's [MCP server](https://www.shiplight.ai/plugins) connects directly to AI coding agents (Claude Code, Cursor, Codex), so the agent that builds a feature can also verify it in a real browser and generate the test automatically.

**Strengths:**

- Tests live in your repo (with Shiplight Cloud for managed execution) — version-controlled, reviewable in PRs

- Shiplight Plugin integrates with AI coding agents (Claude Code, Cursor, Codex)

- Self-healing via intent + cached locators for deterministic speed

- Built on Playwright for cross-browser support

- YAML files are portable — you own your tests even with Shiplight Cloud

- [SOC 2 Type II certified](https://www.aicpa-cima.com/topic/audit-assurance/audit-and-assurance-greater-than-soc-2) with VPC deployment

**Trade-offs:**

- Web-focused (no native mobile or desktop testing)

- More developer-oriented — less accessible for non-technical testers

- Newer platform with a smaller community

- No self-serve pricing page

## The Core Difference: Who Writes the Tests?

Both tools are accessible without coding skills — but they're designed for different workflows.

**testRigor** uses free-form plain English ("click the Submit button"). This makes test authoring easy for non-technical users, but tests live exclusively in testRigor's cloud with no export.

**Shiplight** uses structured YAML with natural language intent. PMs, designers, and QA can all read and review Shiplight tests — but the tests also live in your git repo, run in CI, and integrate directly with AI coding agents via [Shiplight Plugin](https://www.shiplight.ai/plugins). This makes Shiplight the better fit for teams where developers and AI agents are part of the testing workflow, while still being readable by the whole team.

## Test Ownership and Portability

### testRigor

Tests are created and stored exclusively in testRigor's cloud platform. You write them in testRigor's interface, and they execute on testRigor's infrastructure. There is no local copy and no export — the plain English format is proprietary to testRigor's interpreter. If you switch tools, you start over.

### Shiplight

Tests are YAML files committed to your repository — the source of truth lives in git, not in a vendor's cloud. Shiplight Cloud provides managed execution, dashboards, scheduling, and AI-powered failure analysis on top of those same repo-based tests. You get the benefits of a cloud platform (managed infrastructure, team visibility, historical trends) without giving up ownership of your test assets.

**Why this matters:** Both tools have cloud platforms. The difference is where your tests live. With testRigor, tests exist only in their cloud — no repo copy, no export, no portability. With Shiplight, tests are YAML files in your repo that also run in the cloud. If you leave Shiplight, your test specs stay with you.

## Pricing

### testRigor

testRigor starts at approximately $300/month with a minimum of 3 virtual machines. All tiers include unlimited test cases and unlimited users. As test suites grow, additional machines can be added to reduce execution time. This per-machine pricing can scale significantly for large test suites running frequently.

### Shiplight

[Shiplight Plugin is free](https://www.shiplight.ai/plugins) with no account required — AI coding agents can start verifying and generating tests immediately. Platform pricing (cloud execution, dashboards, scheduled runs) requires contacting sales. [Enterprise](https://www.shiplight.ai/enterprise) includes SOC 2 Type II, VPC deployment, RBAC, and 99.99% SLA.

**Honest assessment:** testRigor wins on pricing transparency — you know what you'll pay before talking to sales. Shiplight's free Shiplight Plugin is a strong entry point, but platform pricing requires a conversation.

## When testRigor May Fit

testRigor may be a fit if:

- **Non-technical testers own QA.** If your testing team doesn't code and shouldn't have to, testRigor's plain English approach has the lowest barrier to entry.

- **You need mobile and desktop testing.** testRigor supports iOS, Android, and desktop apps. Shiplight is web-focused.

- **You want broad browser coverage.** testRigor offers 2,000+ browser combinations out of the box.

- **You need API testing built in.** testRigor includes API testing natively. Shiplight handles APIs via inline JavaScript in YAML tests.

- **You want transparent pricing.** testRigor publishes plans and pricing. Shiplight requires contacting sales.

## When to Choose Shiplight

Shiplight is the better fit when:

- **You build with AI coding agents.** [Shiplight Plugin](https://www.shiplight.ai/plugins) connects to Claude Code, Cursor, and Codex — the agent verifies its own work in a real browser during development.

- **You want tests in your repo.** [YAML test files](https://www.shiplight.ai/yaml-tests) live alongside your code, are version-controlled, produce clean diffs, and are reviewable in PRs.

- **Developers own testing.** If engineers are writing and reviewing tests, YAML in git is a natural fit. Plain English in a separate platform adds context-switching.

- **You need enterprise security.** SOC 2 Type II, VPC deployment, immutable audit logs, RBAC, and 99.99% SLA are available. testRigor offers SOC 2 but fewer deployment options.

- **You want no vendor lock-in.** YAML specs are portable. testRigor's tests exist only in their cloud with no export.

- **You need cross-browser with Playwright.** Shiplight runs on Playwright, supporting Chrome, Firefox, and Safari/WebKit. testRigor has broader combinations but uses its own execution engine.

## Frequently Asked Questions

### Can testRigor tests be exported?

No. testRigor tests are written in the platform's proprietary plain English format and executed by testRigor's engine. They cannot be exported as Playwright, Cypress, or Selenium scripts. If you leave testRigor, you'd need to recreate tests in your new tool.

### Does Shiplight support plain English testing?

Shiplight uses YAML with natural language intent statements rather than free-form plain English. The format is structured (intent + action + locator), which makes it deterministic and reviewable, but it requires slightly more structure than testRigor's conversational syntax.

### Which tool has better self-healing?

Both use AI to handle UI changes. testRigor re-interprets plain English instructions on each run. Shiplight uses cached locators for speed and falls back to AI intent resolution when locators break — a two-speed approach that's faster for stable UIs but equally adaptive when things change.
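The two-speed idea can be sketched in a few lines. This is an illustrative model under stated assumptions, not Shiplight's actual implementation: the page is a dict mapping selectors to visible labels, `TwoSpeedResolver` is a hypothetical class, and `naive_intent_resolver` is a keyword match standing in for the AI resolution step.

```python
class TwoSpeedResolver:
    """Try a cached selector first; fall back to intent resolution if stale."""

    def __init__(self, resolve_by_intent):
        self._resolve_by_intent = resolve_by_intent  # slow path, stand-in for AI
        self._cache = {}  # intent text -> last selector that worked
        self.slow_path_calls = 0

    def find(self, page, intent):
        selector = self._cache.get(intent)
        if selector in page:  # fast path: cached selector still exists
            return page[selector]
        # Cache miss or stale selector: resolve from intent and re-cache.
        self.slow_path_calls += 1
        selector = self._resolve_by_intent(page, intent)
        if selector is None:
            self._cache.pop(intent, None)
            raise LookupError(f"no element matches intent: {intent!r}")
        self._cache[intent] = selector
        return page[selector]


def naive_intent_resolver(page, intent):
    """Toy resolver: pick the first element whose label appears in the intent."""
    for selector, label in page.items():
        if label.lower() in intent.lower():
            return selector
    return None


resolver = TwoSpeedResolver(naive_intent_resolver)
old_page = {"#save-btn": "Save"}
new_page = {"button.primary-action": "Save"}  # same button after a redesign
intent = "Click the Save button"

resolver.find(old_page, intent)  # first run: slow path, selector gets cached
resolver.find(old_page, intent)  # stable UI: fast path, no resolution needed
resolver.find(new_page, intent)  # selector is stale: slow path again, cache healed
print(resolver.slow_path_calls)
```

The slow path runs only on the first lookup and again after the selector changes, which is the trade-off described above: cached speed on stable UIs, with intent resolution reserved for the runs where something moved.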

### Can I use both tools together?

In theory, yes — testRigor for mobile/desktop testing and Shiplight for web E2E integrated with AI coding agents. In practice, most teams choose one primary tool to avoid maintaining two test ecosystems.

### What is intent-based testing?

Intent-based testing describes what a test should verify in natural language rather than how to interact with specific DOM elements. Both Shiplight and testRigor use this approach, but implement it differently — testRigor with free-form English, Shiplight with structured YAML intent statements.

## Final Verdict

testRigor and Shiplight solve the same problem — brittle, high-maintenance E2E tests — but for different teams.

testRigor may fit teams where non-technical testers own QA and mobile/desktop coverage is required. However, it comes with vendor lock-in (no test export) and higher costs ($300+/month).

**Shiplight is the stronger choice** for teams where developers and AI coding agents drive the workflow. Tests live in your repo, self-heal automatically, and integrate directly into your coding agent via [Shiplight Plugin](https://www.shiplight.ai/plugins) — with enterprise-grade security and no vendor lock-in. [Book a demo](https://www.shiplight.ai/demo) to see the difference.

## Get Started

- [Try Shiplight Plugin — free, no account needed](https://www.shiplight.ai/plugins)

- [Book a demo](https://www.shiplight.ai/demo)

- [YAML Test Format](https://www.shiplight.ai/yaml-tests)

- [Best AI Testing Tools in 2026](https://www.shiplight.ai/blog/best-ai-testing-tools-2026)

- [Documentation](https://docs.shiplight.ai)

References: [Playwright Documentation](https://playwright.dev), [SOC 2 Type II standard](https://www.aicpa-cima.com/topic/audit-assurance/audit-and-assurance-greater-than-soc-2), [Google Testing Blog](https://testing.googleblog.com/)

---

### From Human-First to Agent-First Testing: What a Year of Building Taught Us

- URL: https://www.shiplight.ai/blog/from-nocode-to-ai-native-testing

- Published: 2026-03-25

- Author: Feng

- Categories: Engineering

- Markdown: https://www.shiplight.ai/api/blog/from-nocode-to-ai-native-testing/raw

We built a cloud-based testing platform for humans. Then AI coding agents changed everything. Here's what we learned building a second product for agent-first workflows.

Full article

[Shiplight Cloud](https://docs.shiplight.ai/cloud/quickstart.html) is a fully-managed, cloud-based natural language testing platform designed to multiply human productivity. Teams author tests visually, the platform handles execution, and results are managed in the cloud. It continues to serve teams that need managed test authoring and execution.

By late 2025, the landscape around us shifted in ways that called for a different product:

- **AI coding agents took off.** They generate testing scripts fast, but the output is hard to review and expensive to maintain. The volume of tests grows, but confidence does not.

- **Roles are collapsing.** The PM → engineer → QA handoff is dissolving. A single person increasingly defines, builds, and verifies with AI. Quality is no longer a separate phase.

- **Specs are becoming the source of truth.** With AI generating code from intent, the canonical representation of product behavior moves upstream from code to structured natural language.

In addition to **Shiplight Cloud**, we built [Shiplight Plugins](https://docs.shiplight.ai/getting-started/quick-start.html) as a new product for developers and automation engineers who work with AI agents. The core principle: AI handles test creation, execution, and maintenance, while the system produces clear evidence at every step for humans to understand and trust.

### Design Goals

1. **Tight feedback loop for AI agents.** AI coding agents produce better results when they get clear, immediate feedback. Verification should happen during development, not after.

2. **Spec-driven.** Tests should read like product specs, not implementation code. Anyone on the team can review what is being tested without technical expertise.

3. **Auto-healing.** Cosmetic and structural UI changes should not break tests as long as the product behavior is unchanged.

4. **Human-readable evidence.** When tests pass or fail, the result should be understandable by anyone on the team without reading code or stack traces.

5. **Performant.** Tests should be fast and repeatable by default. Deterministic replay where possible, AI resolution only when needed.

6. **No new platform to learn.** Extend the tools and workflows developers already use rather than introducing a new system to adopt.

## How **Shiplight Plugins** Works

Here's how this comes together in practice.

### Shiplight Browser MCP Server

Any MCP-compatible coding agent connects to the Shiplight browser MCP server, gaining the ability to open a browser, navigate the app, interact with elements, take screenshots, and observe network activity.

The server can do more than launch a fresh browser: it can attach to an existing Chrome DevTools URL to test against a running dev environment with real data and authenticated state. A relay server supports remote and headless setups.

The AI agent navigates the application as a human would, producing a structured test as output.

### Tests Are Natural Language, Not Code

We designed Shiplight tests around natural language in YAML format to solve the readability and maintenance problems with AI-generated [Playwright](https://playwright.dev/) scripts:

```yaml
goal: Verify that a user can log in and create a new project
base_url: https://your-app.com
statements:
  - URL: /login
  - intent: Enter email address
    action: input_text
    locator: "getByPlaceholder('Email')"
    text: "{{TEST_EMAIL}}"
  - intent: Enter the password
    action: input_text
    locator: "getByPlaceholder('Password')"
    text: "{{TEST_PASSWORD}}"
  - intent: Click Sign In
    action: click
    locator: "getByRole('button', { name: 'Sign In' })"
  - VERIFY: The dashboard is visible with a welcome message
  - intent: Click "New Project" in the sidebar
    action: click
    locator: "getByRole('link', { name: 'New Project' })"
  - VERIFY: The project creation form is displayed
```

Each test describes the flow in human terms, following [web testing best practices](https://testing.googleblog.com/) that emphasize clarity and maintainability. The same person who specified the feature can review the test without understanding test code. Files live in the repo, are reviewed in PRs, and produce clean diffs. Intent-based steps resolve via AI at runtime or use cached locators for deterministic replay. Custom logic (API calls, database queries, setup) embeds inline as JavaScript.
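To make the statement shapes in the example concrete, here is a minimal Python sketch. It assumes the YAML has already been parsed into dictionaries. The helper names (`classify`, `fill_variables`), the labels they return, and the idea that `{{NAME}}` placeholders are filled from a plain mapping are illustrative assumptions, not Shiplight's implementation.

```python
import re

# Statements as they might look after parsing the YAML above into Python dicts.
statements = [
    {"URL": "/login"},
    {
        "intent": "Enter email address",
        "action": "input_text",
        "locator": "getByPlaceholder('Email')",
        "text": "{{TEST_EMAIL}}",
    },
    {"intent": "Click Sign In"},
    {"VERIFY": "The dashboard is visible with a welcome message"},
]

PLACEHOLDER = re.compile(r"\{\{(\w+)\}\}")


def classify(statement: dict) -> str:
    """Return a label for the statement shape (labels are hypothetical)."""
    if "URL" in statement:
        return "navigate"
    if "VERIFY" in statement:
        return "verify"
    if "intent" in statement and "locator" in statement:
        return "cached_action"  # a locator is present, so replay can be deterministic
    if "intent" in statement:
        return "intent_only"  # no locator yet, so it must be resolved at runtime
    raise ValueError(f"unrecognized statement: {statement!r}")


def fill_variables(value: str, variables: dict) -> str:
    """Replace {{NAME}} placeholders; an unknown name raises KeyError."""
    return PLACEHOLDER.sub(lambda m: variables[m.group(1)], value)


kinds = [classify(s) for s in statements]
email = fill_variables(statements[1]["text"], {"TEST_EMAIL": "qa@example.com"})
```

Keeping the classification separate from execution is one way to let a reviewer see which steps replay deterministically and which depend on runtime resolution.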

### Run, Debug, and Get Reports with the CLI

`shiplight test` runs tests locally. `shiplight debug` opens an interactive debugger to step through tests one statement at a time, inspect browser state, and edit steps in place.

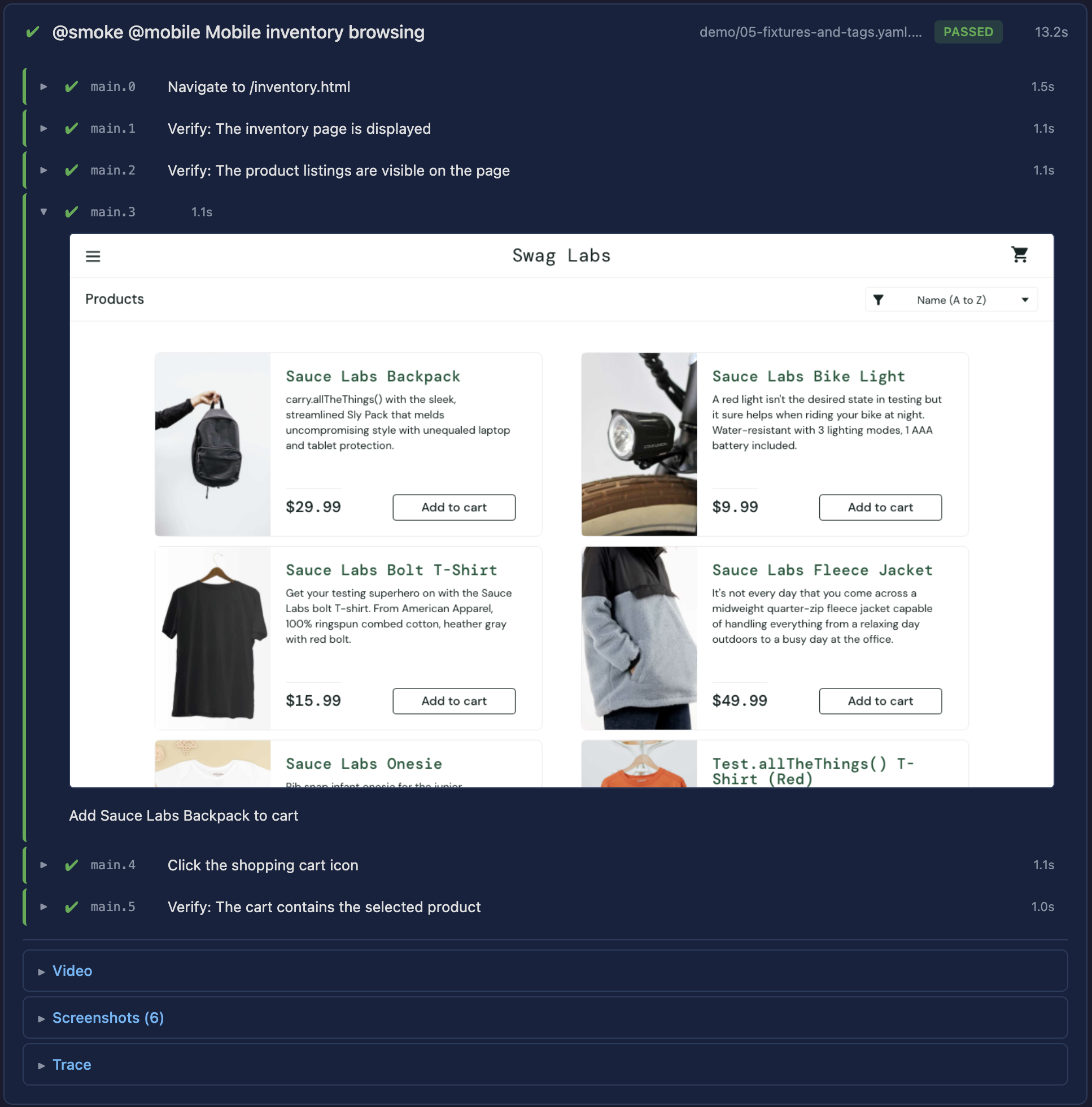

After a run, Shiplight generates an HTML report. We retained the best of [Playwright](https://playwright.dev/) (video recording, trace data) and addressed what was lacking. Instead of cryptic selectors and programmatic steps, reports show natural language steps paired with screenshots.

On failure, the report shows a screenshot of the actual page state, the expected behavior, and an AI-generated explanation, for example: "Expected a welcome message, but the page displays 'Session Expired'." Anyone on the team can read it without code context.

### Drop Into Your Existing Workflow

Tests are YAML files in the repo. The CLI runs anywhere Node.js runs. GitHub Actions, GitLab CI, and CircleCI require minimal configuration: add a step and point it at the test directory.

**Shiplight Cloud** features (scheduled runs, team dashboards, historical trends, hosted reports) are available when needed. But the core loop works entirely with the CLI and existing CI. No lock-in.

## What's Next

A year ago we built a platform to help humans test more productively. Now we are building for a world where one person, operating AI, designs, builds, and verifies a feature in a single session.

The role of testing is not disappearing — it is shifting. The tooling needs to reflect that: verification integrated into the development flow, evidence clear enough to trust without re-doing the work, and tests that maintain themselves as the product evolves.

We are building Shiplight to be that layer.

### Key Takeaways

- **Verify in a real browser during development.** Shiplight's MCP server lets AI coding agents open a browser and validate UI changes before code review — not after deployment.

- **Generate stable regression tests automatically.** Verifications become YAML test files in your repo, building regression coverage as a byproduct of development.

- **Reduce maintenance with AI-driven self-healing.** Intent-based test steps adapt to UI changes automatically. Cached locators keep execution fast; AI resolves only when needed.

- **Enterprise-ready security and deployment.** [SOC 2 Type II](https://www.aicpa-cima.com/topic/audit-assurance/audit-and-assurance-greater-than-soc-2) certified, encrypted data, role-based access, immutable audit logs, and a 99.99% uptime SLA.

- [Quick Start guide](https://docs.shiplight.ai/getting-started/quick-start.html)

- [YAML Test Language Spec](https://github.com/ShiplightAI/examples/blob/main/yaml-examples/YAML-TEST-LANGUAGE-SPEC.md)

- [Shiplight Plugins overview](https://www.shiplight.ai/plugins)

---

### A 30-Day Playbook for Replacing Manual Regression with Agentic E2E Testing

- URL: https://www.shiplight.ai/blog/30-day-agentic-e2e-playbook

- Published: 2026-03-25

- Author: Shiplight AI Team

- Categories: Engineering, Enterprise, Guides, Best Practices

- Markdown: https://www.shiplight.ai/api/blog/30-day-agentic-e2e-playbook/raw

Manual regression testing rarely fails because teams do not care about quality. It fails because it does not scale with product velocity. The moment your UI, permissions, and integrations start changing weekly, the regression checklist becomes a second product that nobody has time to maintain.

Full article

Manual regression testing rarely fails because teams do not care about quality. It fails because it does not scale with product velocity. The test automation ROI case is straightforward: teams that shift from manual regression to automated coverage reduce testing costs by 60-80% while catching regressions earlier — a shift-left testing approach that prevents bugs from reaching staging. The moment your UI, permissions, and integrations start changing weekly, the regression checklist becomes a second product that nobody has time to maintain.

Agentic QA changes the operating model. Instead of treating end-to-end testing as brittle scripts owned by a small QA group, you build intent-based coverage that is readable, reviewable, and resilient as the application evolves. Shiplight AI is designed for exactly that: autonomous agents and no-code tools that help teams scale end-to-end test coverage with near-zero maintenance.

Below is a practical 30-day rollout plan that engineering leaders and QA owners can use to modernize E2E coverage without slowing delivery.

## The goal: make regression a product capability, not a hero effort

A modern regression system has three outcomes:

1. **Coverage grows as the product grows.** New features ship with tests as a default behavior, not a special project.

2. **Failures are actionable.** When something breaks, the team can localize the issue quickly and decide whether it is a product regression or a test that needs adjustment.

3. **Maintenance stays bounded.** UI changes should not trigger a constant rewrite cycle.

Shiplight’s approach starts with tests expressed as *user intent*, then executes them on top of Playwright for speed and reliability, adding an AI layer to reduce brittleness.

## Week 1: Pick the “thin slice” journeys that actually gate releases

Most teams try to automate everything at once. That is how automation initiatives stall. Instead, choose 5 to 10 **mission-critical user journeys** that represent real release risk. Examples:

- Sign up, login, password reset

- Checkout or payment flow

- Role-based access paths (admin vs. member)

- A primary workflow that spans multiple pages and services

Shiplight is built to let teams create tests from natural language, which is useful here because it forces you to define the journey in business terms first.

**Deliverable at the end of Week 1:** a short, shared “release gate list” of journeys with owners and success criteria.

## Week 2: Author readable intent-first tests, then optimize the steps that matter

Shiplight supports YAML test flows written in natural language, designed to stay readable for human review while still running as standard Playwright under the hood.

A minimal test has a goal and a list of statements:

```yaml
goal: Verify user journey
statements:
  - intent: Navigate to the application
  - intent: Perform the user action
  - VERIFY: the expected result
```

In Shiplight’s model, **locators are a cache**. You can start with natural language for clarity, then enrich steps with deterministic locators for speed. If the UI changes, Shiplight can fall back to the natural-language description to find the right element and recover.

In the Test Editor, steps can run in **Fast Mode** (cached selectors, performance-optimized) or **AI Mode** (dynamic evaluation, adaptability). The right pattern for most teams is:

- Use AI Mode for rapid authoring and for steps that commonly shift.

- Convert stable, high-frequency steps to Fast Mode to optimize execution time.

- Keep assertions intent-based so failures stay meaningful.
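The "locators are a cache" idea can be sketched in a few lines of Python. This is a toy model under stated assumptions: the page is a plain dictionary, `run_step` and `keyword_resolver` are hypothetical names, and the keyword match merely stands in for whatever AI resolution Shiplight actually performs.

```python
from typing import Callable, Optional

# A "page" is modeled as a mapping from locator string to visible label.
Page = dict


def run_step(
    page: Page,
    intent: str,
    cached_locator: Optional[str],
    resolve_by_intent: Callable[[Page, str], Optional[str]],
) -> tuple:
    """Try the cached locator first; fall back to intent resolution.

    Returns (locator_used, mode), where mode is "fast" or "ai".
    """
    if cached_locator is not None and cached_locator in page:
        return cached_locator, "fast"
    healed = resolve_by_intent(page, intent)
    if healed is None:
        raise LookupError(f"could not resolve step: {intent}")
    return healed, "ai"


def keyword_resolver(page: Page, intent: str) -> Optional[str]:
    """Stand-in for an AI resolver: match on the element's visible label."""
    for locator, label in page.items():
        if label.lower() in intent.lower():
            return locator
    return None


# The button's selector changed, so the cached locator is stale.
page = {"#submit-v2": "Sign In"}
locator, mode = run_step(page, "Click Sign In", "#submit", keyword_resolver)
```

The fast path never calls the resolver, which is the point of treating the locator as a cache: the slower lookup only runs when the cached value no longer matches the page.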

**Deliverable at the end of Week 2:** your thin-slice journeys automated end to end, readable enough to review in a PR, and stable enough to run repeatedly.

## Week 3: Make tests part of the PR and deployment workflow

Coverage only matters if it runs where decisions get made. Shiplight provides a GitHub Actions integration that runs test suites using a Shiplight API token and suite IDs, and can comment results back on pull requests.

This is the week to introduce two quality gates:

1. **PR gate for critical journeys** (fast feedback, smaller scope)

2. **Scheduled regression gate** (broader coverage, runs daily or pre-release)

If you use preview environments, configure the workflow to pass the preview URL so tests validate the exact artifact under review.

**Deliverable at the end of Week 3:** E2E results are visible in the same place engineers work, and regressions surface before merge, not after release.

## Week 4: Reduce flaky toil with auto-healing and operationalize ownership

UI tests break for two reasons: product regressions and UI drift. A modern system handles both without wasting engineering cycles.

Shiplight’s Test Editor includes **auto-healing behavior**: when a Fast Mode action fails, it can retry in AI Mode to dynamically identify the correct element. In the editor, that change is visible and can be saved or reverted. In cloud execution, it can recover without modifying the test configuration.

At this stage, define ownership and triage rules:

- **Owners by journey**, not by test file

- **A weekly review** of failures: what was real, what was drift, what should become a stronger assertion

- **A standard for test intent**: step descriptions should read like user behavior, not DOM details
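For the weekly review, a rough first-pass split between "real" and "drift" can be automated. The Python sketch below assumes each step result records whether it passed with its cached locator and whether an AI retry recovered it. The `StepResult` fields and the three labels are hypothetical, and a human still decides what a `needs_review` failure means.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class StepResult:
    journey: str
    fast_mode_passed: bool
    ai_mode_passed: bool  # only meaningful when fast mode failed


def triage(result: StepResult) -> str:
    """Label a result for the weekly review (labels are illustrative)."""
    if result.fast_mode_passed:
        return "pass"
    if result.ai_mode_passed:
        return "drift"  # the UI moved, but the intent was still satisfiable
    return "needs_review"  # possible product regression


results = [
    StepResult("checkout", True, False),
    StepResult("login", False, True),
    StepResult("password reset", False, False),
]
summary = Counter(triage(r) for r in results)
```

Grouping by `journey` rather than by test file keeps the report aligned with the ownership rule above.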

If your critical journeys include email verification or magic links, Shiplight also supports email content extraction as part of a test flow, with extracted results stored in variables you can use in subsequent steps.
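The general shape of an email step is "wait for a message, extract a value, store it in a variable". Here is a minimal Python sketch of that shape. The inbox and the extractor are injected as plain callables and are stand-ins only: Shiplight's extractor is described as LLM-based, and the string split used here is just a placeholder so the example runs on its own.

```python
import time
from typing import Callable, Optional


def wait_for_value(
    fetch_latest_email: Callable[[], Optional[str]],
    extract: Callable[[str], Optional[str]],
    timeout_s: float = 5.0,
    interval_s: float = 0.01,
) -> str:
    """Poll the inbox until the extractor finds a value or time runs out."""
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        body = fetch_latest_email()
        if body is not None:
            value = extract(body)
            if value is not None:
                return value
        time.sleep(interval_s)
    raise TimeoutError("no matching email arrived in time")


# Fake inbox: empty on the first two polls, then the message arrives.
polls = iter([None, None, "Your verification code is 481516"])
variables = {}
variables["OTP_CODE"] = wait_for_value(
    fetch_latest_email=lambda: next(polls),
    extract=lambda body: body.rsplit(" ", 1)[-1],  # placeholder extractor
)
```

A later step could then reference the stored value by name, in the same way the login example references `{{TEST_EMAIL}}`.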

**Deliverable at the end of Week 4:** fewer “false red builds,” clearer diagnostics, and a steady cadence for expanding coverage beyond the initial thin slice.

## What “enterprise-ready” means in practice

If you operate in a regulated environment, E2E testing needs to meet the same standards as the rest of your tooling. Shiplight positions its enterprise offering around SOC 2 Type II certification and controls like encryption in transit and at rest, role-based access control, and immutable audit logs. It also supports private cloud and VPC deployments and provides a 99.99% uptime SLA.

That matters because quality tooling becomes part of your delivery chain. It needs to be trustworthy, observable, and auditable.

## The takeaway: start small, make it real, then scale

The fastest way to modernize QA is not a grand rewrite. It is a rollout that:

- Automates the journeys that gate releases

- Keeps tests readable in intent-first language

- Optimizes execution where it matters

- Integrates results directly into PR and CI workflows

- Uses auto-healing to keep maintenance bounded

Shiplight’s core promise is simple: ship faster without breaking what users depend on, by letting autonomous agents and practical tooling do the heavy lifting of E2E coverage and upkeep.

## Related Articles

- [intent-cache-heal pattern](https://www.shiplight.ai/blog/intent-cache-heal-pattern)

- [best AI testing tools in 2026](https://www.shiplight.ai/blog/best-ai-testing-tools-2026)

- [PR-ready E2E tests](https://www.shiplight.ai/blog/pr-ready-e2e-test)

## Key Takeaways

- **Verify in a real browser during development.** Shiplight Plugin lets AI coding agents validate UI changes before code review.

- **Generate stable regression tests automatically.** Verifications become YAML test files that self-heal when the UI changes.

- **Reduce maintenance with AI-driven self-healing.** Cached locators keep execution fast; AI resolves only when the UI has changed.

- **Integrate E2E testing into CI/CD as a quality gate.** Tests run on every PR, catching regressions before they reach staging.

## Frequently Asked Questions

### What is AI-native E2E testing?

AI-native E2E testing uses AI agents to create, execute, and maintain browser tests automatically. Unlike traditional test automation that requires manual scripting, AI-native tools like Shiplight interpret natural language intent and self-heal when the UI changes.

### How do self-healing tests work?

Self-healing tests use AI to adapt when UI elements change. Shiplight uses an intent-cache-heal pattern: cached locators provide deterministic speed, and AI resolution kicks in only when a cached locator fails — combining speed with resilience.

### How do you test email and authentication flows end-to-end?

Shiplight supports testing full user journeys including login flows and email-driven workflows. Tests can interact with real inboxes and authentication systems, verifying the complete path from UI to inbox.

### How does E2E testing integrate with CI/CD pipelines?

Shiplight's CLI runs anywhere Node.js runs. Add a single step to GitHub Actions, GitLab CI, or CircleCI — tests execute on every PR or merge, acting as a quality gate before deployment.

## Get Started

- [Try Shiplight Plugin](https://www.shiplight.ai/plugins)

- [Book a demo](https://www.shiplight.ai/demo)

- [YAML Test Format](https://www.shiplight.ai/yaml-tests)

- [Enterprise features](https://www.shiplight.ai/enterprise)

References: [Playwright Documentation](https://playwright.dev), [SOC 2 Type II standard](https://www.aicpa-cima.com/topic/audit-assurance/audit-and-assurance-greater-than-soc-2), [GitHub Actions documentation](https://docs.github.com/en/actions), [Google Testing Blog](https://testing.googleblog.com/)

---

### How to Make E2E Failures Actionable: A Modern Debugging Playbook (With Shiplight AI)

- URL: https://www.shiplight.ai/blog/actionable-e2e-failures

- Published: 2026-03-25

- Author: Shiplight AI Team

- Categories: Engineering, Enterprise, Guides, Best Practices

- Markdown: https://www.shiplight.ai/api/blog/actionable-e2e-failures/raw

End-to-end testing rarely fails because teams do not care about quality. It fails because the feedback loop is broken.

Full article

End-to-end testing rarely fails because teams do not care about quality. It fails because the feedback loop is broken.

A flaky UI test that sometimes passes is not just inconvenient. It is expensive. It trains engineers to ignore red builds, bloats CI time, and turns releases into a negotiation: “Do we trust the failure, or do we ship anyway?”

This post is a practical playbook for turning E2E failures into *actionable signal*. Not “more tests,” not “more dashboards,” not “more heroics.” Just a system that answers three questions fast:

1. **What broke?**

2. **Where did it break?**

3. **What should we do next?**

Shiplight AI is built around that exact loop, from intent-first test authoring to AI-assisted triage and debugging across local, cloud, and CI workflows.

## 1) Start with intent that humans can read (and review)

Actionable failures begin with readable tests. If your test suite is a pile of brittle selectors and framework-specific abstractions, your failures will be brittle too.

Shiplight tests can be written in YAML using natural language statements, including explicit `VERIFY:` assertions. That makes tests reviewable by the whole team, not only the person who wrote the automation.

Here is the basic structure Shiplight documents:

```yaml
goal: Verify user journey
statements:
  - intent: Navigate to the application
  - intent: Perform the user action
  - VERIFY: the expected result
```

In practice, this does something subtle but important: it makes a failure legible. When a test fails, you do not need to reverse-engineer intent from implementation details.

## 2) Make execution fast without making it fragile

Debugging gets painful when every run takes 20 minutes. But speed often comes at a cost: tests become tightly coupled to DOM structure and UI implementation details.

Shiplight’s approach is a hybrid:

- **Natural language steps** can be resolved at runtime by an agent that “looks at the page” and decides what to do.

- Tests can also be **enriched** with explicit Playwright locators for deterministic replay.

- Those locators act as a **cache**, not a hard dependency. If the UI shifts, Shiplight can fall back to the natural language description and recover.

Shiplight also documents that the YAML layer is an authoring layer, and the underlying runner is Playwright with an AI agent on top.

That matters for actionability because it reduces the two biggest E2E taxes:

- The tax of slow feedback

- The tax of constant maintenance after UI changes

## 3) When something breaks, capture evidence that engineers can use

Most E2E tooling fails the moment a test goes red. It gives you a stack trace and a screenshot, then walks away.

Shiplight’s Test Editor includes a debugging workflow designed for investigation, not just execution: step-by-step mode, partial execution, rollback, and a Live View panel with a screenshot gallery, console output, and test context (including variables).

This matters because actionability is not only “why did it fail,” but “can I reproduce it and prove the fix?” A debugger that supports stepping, previewing, and iterating shortens that loop.

## 4) Reduce triage time with AI summaries that point to root cause

Even with good debugging tools, triage time becomes a bottleneck when failures stack up across suites and environments.

Shiplight’s **AI Test Summary** is designed to compress investigation by analyzing failed runs and producing a structured explanation, including root cause analysis, expected vs actual behavior, recommendations, and tagging. The documentation also notes visual context analysis using screenshots.
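To picture what a structured explanation might contain, here is a small Python sketch of a summary record. The field names mirror the elements listed above (root cause, expected vs actual, recommendations, tags), but the `FailureSummary` class, its layout, and the sample values are assumptions made for illustration, not Shiplight's actual output format.

```python
from dataclasses import dataclass, field


@dataclass
class FailureSummary:
    test_name: str
    root_cause: str
    expected: str
    actual: str
    recommendations: list = field(default_factory=list)
    tags: list = field(default_factory=list)

    def to_markdown(self) -> str:
        """Render the summary as a short Markdown block for a PR comment."""
        lines = [
            f"### {self.test_name}",
            f"- Root cause: {self.root_cause}",
            f"- Expected: {self.expected}",
            f"- Actual: {self.actual}",
        ]
        lines += [f"- Recommendation: {r}" for r in self.recommendations]
        if self.tags:
            lines.append("- Tags: " + ", ".join(self.tags))
        return "\n".join(lines)


# Sample values are invented for the example.
summary = FailureSummary(
    test_name="Login and create project",
    root_cause="Session expired before the dashboard loaded",
    expected="A welcome message on the dashboard",
    actual="The page displays 'Session Expired'",
    recommendations=["Refresh the saved session before the run"],
    tags=["auth", "environment"],
)
report = summary.to_markdown()
```

Putting expected and actual side by side is what lets a reader decide quickly whether the failure is a product regression or an environment problem.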

The goal is not to replace engineering judgment. It is to make the first pass faster, so the team spends time fixing, not deciphering.

## 5) Put actionability where it belongs: in the pull request workflow

E2E tests are most valuable when they act as a release gate, not a nightly report nobody reads.

Shiplight provides a GitHub Actions integration that runs suites from CI using a Shiplight API token and suite and environment IDs. The documented example uses `ShiplightAI/github-action@v1`, supports running on pull requests, and can be configured to comment results back on PRs.

That flow matters because it turns “we should test this” into “this change ships with proof.”

Separately, Shiplight’s results UI is organized around the concept of a *run* as a specific execution of a suite, making it straightforward to review historical executions and filter what you are looking at.

## 6) Test the workflows users actually experience (including email)

For many products, the most failure-prone journeys are not just UI clicks. They are workflows like password resets, magic links, and verification codes.

Shiplight documents an **Email Content Extraction** feature that can read incoming emails and extract verification codes, activation links, or custom content using an LLM-based extractor, without regex-heavy parsing.

For teams trying to build realistic E2E coverage, that is the difference between “we tested the happy path” and “we tested the whole journey.”

## 7) Enterprise readiness: security and deployment options

Quality tooling touches sensitive surfaces: credentials, production-like environments, and mission-critical workflows. Shiplight positions its enterprise offering around SOC 2 Type II certification, encryption in transit and at rest, role-based access control, immutable audit logs, and a 99.99% uptime SLA, along with private cloud and VPC deployment options.

(For legal and corporate context, Shiplight’s Terms identify the company as Loggia AI, Inc. doing business as Shiplight AI.)

## Where to start

If your team wants more reliable releases without adding a maintenance burden, start with one principle: **every failure must pay for itself with clear next steps**.

Shiplight’s workflow is built to make that practical: intent-first tests, Playwright-based execution, self-healing locator caching, deep debugging tools, AI summaries, and CI integrations that bring results back to the PR.

When you are ready, you can book a demo with Shiplight’s team directly from the site.

## Related Articles

- [intent-cache-heal pattern](https://www.shiplight.ai/blog/intent-cache-heal-pattern)

- [modern E2E workflow](https://www.shiplight.ai/blog/modern-e2e-workflow)

- [TestOps playbook](https://www.shiplight.ai/blog/testops-playbook)

## Key Takeaways

- **Generate stable regression tests automatically.** Verifications become YAML test files that self-heal when the UI changes.

- **Reduce maintenance with AI-driven self-healing.** Cached locators keep execution fast; AI resolves only when the UI has changed.

- **Integrate E2E testing into CI/CD as a quality gate.** Tests run on every PR, catching regressions before they reach staging.

- **Enterprise-ready security and deployment.** SOC 2 Type II certified, encrypted data, RBAC, audit logs, and a 99.99% uptime SLA.

## Frequently Asked Questions

### How do self-healing tests work?

Self-healing tests use AI to adapt when UI elements change. Shiplight uses an intent-cache-heal pattern: cached locators provide deterministic speed, and AI resolution kicks in only when a cached locator fails — combining speed with resilience.

### How do you test email and authentication flows end-to-end?

Shiplight supports testing full user journeys including login flows and email-driven workflows. Tests can interact with real inboxes and authentication systems, verifying the complete path from UI to inbox.

### How does E2E testing integrate with CI/CD pipelines?

Shiplight's CLI runs anywhere Node.js runs. Add a single step to GitHub Actions, GitLab CI, or CircleCI — tests execute on every PR or merge, acting as a quality gate before deployment.

### Is Shiplight enterprise-ready?

Yes. Shiplight is SOC 2 Type II certified with encrypted data in transit and at rest, role-based access control, immutable audit logs, and a 99.99% uptime SLA. Private cloud and VPC deployment options are available.

## Get Started

- [Try Shiplight Plugin](https://www.shiplight.ai/plugins)

- [Book a demo](https://www.shiplight.ai/demo)

- [YAML Test Format](https://www.shiplight.ai/yaml-tests)

- [Enterprise features](https://www.shiplight.ai/enterprise)

References: [Playwright Documentation](https://playwright.dev), [SOC 2 Type II standard](https://www.aicpa-cima.com/topic/audit-assurance/audit-and-assurance-greater-than-soc-2), [GitHub Actions documentation](https://docs.github.com/en/actions), [Google Testing Blog](https://testing.googleblog.com/)

---

### The Practical Buyer’s Guide to AI-Native E2E Testing (and What Shiplight AI Gets Right)

- URL: https://www.shiplight.ai/blog/ai-native-e2e-buyers-guide

- Published: 2026-03-25

- Author: Shiplight AI Team

- Categories: Engineering, Enterprise, Guides, Best Practices

- Markdown: https://www.shiplight.ai/api/blog/ai-native-e2e-buyers-guide/raw

Modern release velocity has broken the old QA contract.

Full article

Modern release velocity has broken the old QA contract.

Teams ship UI changes daily. AI coding agents can generate large diffs in minutes. Meanwhile, traditional end-to-end automation still tends to fail in the same two places: it is slow to author, and expensive to maintain once the UI inevitably shifts.

That gap is exactly where "AI-native testing" should help. In practice, many tools stop at test generation and leave teams with the same operational burden: brittle selectors, flaky assertions, and debugging workflows that pull engineers out of flow.

If you are evaluating an AI-powered E2E platform, here is a practical checklist of capabilities that matter in production, plus how Shiplight AI approaches each one.

## 1) Verification has to live where code is written, not after it ships

The biggest shift is not "AI writes tests." It is "verification happens inside the development loop."

Shiplight is built to connect directly to AI coding agents via [Shiplight Plugin](https://www.shiplight.ai/plugins), so your agent can open a real browser, validate a change, and then turn that verification into durable regression coverage. The goal is simple: catch issues before review and merge, not after release.

**What to look for:** tight feedback loops, browser-based verification (not screenshots alone), and a workflow that does not require a separate QA handoff.

## 2) Tests should be readable enough to review, but grounded enough to run deterministically

If E2E coverage is going to scale across a team, test intent needs to be understandable by more than the one person who wrote the script six months ago.

Shiplight’s local workflow uses YAML test flows written in natural language, with a clear structure: a `goal`, a starting `url`, and a list of `statements` that read like user intent. The same YAML tests can run locally with Playwright, using `npx playwright test`, alongside existing `.test.ts` files.

A simple example looks like this:

```yaml
goal: Verify user journey
statements:
  - intent: Navigate to the application
  - intent: Perform the user action
  - VERIFY: the expected result
```

**What to look for:** a format that stays human-reviewable in PRs, but does not rely on "best-effort AI" for every step on every run.

## 3) Self-healing only matters if it preserves speed and determinism

Most teams do not mind a tool that can "figure it out" once. They mind a tool that has to "figure it out" every time.

Shiplight’s approach is pragmatic: locators can be treated as a performance cache. Tests can replay quickly using deterministic actions with explicit locators, but when the UI changes and a cached locator becomes stale, the agentic layer can fall back to the natural-language intent to find the right element.

This is also where Shiplight’s positioning around intent-based execution matters: the test is expressed as user intent, rather than being permanently coupled to brittle selectors.

**What to look for:** self-healing that reduces maintenance without turning every run into a slow, non-deterministic exploration.

## 4) The real "hard parts" of E2E are auth and email, so your platform should treat them as first-class

A surprising number of E2E programs fail not because clicking buttons is hard, but because the workflows are real.

Two examples:

### Authenticated apps

Shiplight’s MCP UI Verifier docs recommend a simple, production-friendly pattern: log in once manually, save session state, and let the agent reuse it so you do not re-authenticate on every verification run. Shiplight stores the state locally so future sessions can restore it.
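The "log in once, reuse the state" pattern can be sketched with nothing but a local JSON file. The Python below is a simplified illustration: the file location, the `save_session` and `load_session` helpers, and the cookie shape are assumptions, and real session state usually carries more than cookies.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional


def save_session(path: Path, cookies: list) -> None:
    """Persist session state to a local file after a manual login."""
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps({"cookies": cookies}))


def load_session(path: Path) -> Optional[dict]:
    """Return saved state, or None if a login is still needed."""
    if not path.exists():
        return None
    return json.loads(path.read_text())


# A temporary directory stands in for the project's local state folder.
state_file = Path(tempfile.mkdtemp()) / "auth" / "state.json"

first_run = load_session(state_file)  # nothing saved yet
save_session(state_file, [{"name": "sid", "value": "abc123"}])
second_run = load_session(state_file)  # later sessions restore the state
```

Because the file holds live credentials, it would normally be excluded from version control.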

### Email-driven flows

Shiplight also supports email content extraction for tests, designed to pull verification codes, activation links, or other structured content from incoming emails using an LLM-based extractor, without regex-heavy harnesses.

**What to look for:** explicit support for the flows you actually ship: SSO, 2FA, magic links, onboarding sequences, and transactional email.

## 5) Great tooling reduces context switching, not just test-writing time

Even strong automation fails if debugging is painful.

Shiplight provides a VS Code Extension for creating, running, and debugging `.test.yaml` files with an interactive visual debugger inside the editor. It lets you step through statements, inspect and edit action entities inline, and iterate quickly.

For teams that want a local, interactive environment without relying on cloud browser sessions, Shiplight also offers a native macOS desktop app that loads the Shiplight web UI while running the browser sandbox and AI agent worker locally.

**What to look for:** fast local iteration, IDE-native workflows, and debugging that feels like engineering, not archaeology.

## 6) CI integration is table stakes; actionable signal is the differentiator

A testing platform is only as valuable as the signal it produces when something breaks.

Shiplight Cloud includes test management and execution capabilities, and it integrates with CI, including a documented GitHub Actions integration that uses API tokens, suite and environment IDs, and standard GitHub secrets.

When failures happen, Shiplight’s AI Test Summary is designed to analyze failed results and produce root-cause identification, human-readable explanations, and visual context analysis based on screenshots.

**What to look for:** failure output that shortens time to diagnosis, not just a red build badge and a screenshot dump.

## 7) Enterprise readiness should be explicit, not implied

If E2E testing touches production-like data, credentials, or regulated workflows, "security later" is not a plan.

Shiplight positions its enterprise offering around SOC 2 Type II certification, encryption in transit and at rest, role-based access control, and immutable audit logs. It also lists a 99.99% uptime SLA and supports integrations across CI and common collaboration tools.

**What to look for:** clear compliance posture, access controls, auditability, and an availability story that matches how mission-critical E2E becomes.

## A final way to think about it: the platform should scale with your velocity

The promise of AI-native development is speed. The risk is shipping regressions faster.

Shiplight’s core bet is that verification should be continuous, agent-compatible, and resilient by design: validate changes in a real browser during development, convert that work into regression coverage, and keep the suite stable as the UI evolves.

If your current E2E program feels like a maintenance tax, the right evaluation question is not "Can this tool generate tests?" It is: **"Can this tool keep tests valuable six months from now, when the product has changed?"**

## Related Articles

- [best AI testing tools compared](https://www.shiplight.ai/blog/best-ai-testing-tools-2026)

- [intent-cache-heal pattern](https://www.shiplight.ai/blog/intent-cache-heal-pattern)

- [Playwright alternatives for no-code testing](https://www.shiplight.ai/blog/playwright-alternatives-no-code-testing)

## Key Takeaways

- **Verify in a real browser during development.** Shiplight Plugin lets AI coding agents validate UI changes before code review.

- **Generate stable regression tests automatically.** Verifications become YAML test files that self-heal when the UI changes.

- **Reduce maintenance with AI-driven self-healing.** Cached locators keep execution fast; AI resolves only when the UI has changed.

- **Enterprise-ready security and deployment.** SOC 2 Type II certified, encrypted data, RBAC, audit logs, and a 99.99% uptime SLA.

## Frequently Asked Questions

### What is AI-native E2E testing?

AI-native E2E testing uses AI agents to create, execute, and maintain browser tests automatically. Unlike traditional test automation that requires manual scripting, AI-native tools like Shiplight interpret natural language intent and self-heal when the UI changes.

### How do self-healing tests work?

Self-healing tests use AI to adapt when UI elements change. Shiplight uses an intent-cache-heal pattern: cached locators provide deterministic speed, and AI resolution kicks in only when a cached locator fails — combining speed with resilience.

### What is MCP testing?

MCP (Model Context Protocol) lets AI coding agents connect to external tools. Shiplight Plugin enables agents in Claude Code, Cursor, or Codex to open a real browser, verify UI changes, and generate tests during development.

### How do you test email and authentication flows end-to-end?

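Shiplight supports testing full user journeys, including login flows and email-driven workflows. Authenticated sessions can be saved once and reused across runs, and an LLM-based extractor pulls verification codes or activation links from incoming emails, so a test can follow the complete path from UI to inbox.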

## Get Started

- [Try Shiplight Plugin](https://www.shiplight.ai/plugins)

- [Book a demo](https://www.shiplight.ai/demo)

- [YAML Test Format](https://www.shiplight.ai/yaml-tests)

- [Enterprise features](https://www.shiplight.ai/enterprise)

References: [Playwright Documentation](https://playwright.dev), [SOC 2 Type II standard](https://www.aicpa-cima.com/topic/audit-assurance/audit-and-assurance-greater-than-soc-2), [Google Testing Blog](https://testing.googleblog.com/)

---

### The AI Coding Era Needs an AI-Native QA Loop (and How to Build One)

- URL: https://www.shiplight.ai/blog/ai-native-qa-loop

- Published: 2026-03-25

- Author: Shiplight AI Team

- Categories: Engineering, Guides, Best Practices

- Markdown: https://www.shiplight.ai/api/blog/ai-native-qa-loop/raw

AI coding agents have changed the shape of software delivery. Features ship faster, pull requests multiply, and UI changes happen continuously. But one thing has not magically sped up with the rest of the stack: confidence.

Most teams still rely on a mix of unit tests, a handful of brittle end-to-end scripts, and human spot checks that happen when someone has time. That model breaks down when development velocity is no longer limited by humans writing code. It is limited by humans proving the code works.

Shiplight AI was built for this moment: agentic end-to-end testing that keeps up with AI-driven development. It connects to modern coding agents via [Shiplight Plugin](https://www.shiplight.ai/plugins), validates changes in a real browser, and turns those verifications into maintainable, intent-based tests that require near-zero maintenance.

This post outlines a practical, developer-friendly approach to building an AI-native QA loop, starting locally and scaling to CI and cloud execution.

## Why traditional E2E testing struggles at AI velocity

End-to-end testing has always been the “truth layer” for user journeys, but it comes with predictable failure modes:

- **Tests are hard to author and harder to maintain.** Most frameworks require scripting expertise and careful selector work.

- **Selectors do not survive product iteration.** UI refactors, renamed buttons, and layout changes routinely break tests even when the user journey still works.

- **Failures create noise instead of decisions.** A broken E2E run often produces logs, not diagnosis.

AI-assisted development amplifies each problem. When the UI evolves daily, test upkeep becomes a tax that grows with every release.

Shiplight’s approach is to keep tests expressed as **intent**, not implementation details, and to pair that with an autonomous layer that can verify behavior directly in a browser.

## What Shiplight is (in plain terms)

Shiplight is an agentic QA platform for end-to-end testing that:

- Runs on top of **Playwright**, with a natural-language layer above it.

- Lets teams create tests by describing user flows in **plain English**, then refine them visually.

- Uses **intent-based execution** and **self-healing** to stay resilient when UIs change.

- Offers multiple ways to adopt it, including:

  - **Shiplight Plugin** for AI coding agents

  - **Shiplight Cloud** for team-wide test management, scheduling, and reporting

  - **AI SDK** to extend existing Playwright suites with AI-native stabilization

  - A **Desktop App** with a local browser sandbox and bundled MCP server

  - A **VS Code Extension** for visual debugging of YAML tests

You can even get started without handing over codebase access. Shiplight’s onboarding flow emphasizes starting from your application URL and a test account, then expanding coverage from there.

## The AI-native QA loop: Verify, codify, operationalize

### 1) Verify changes in a real browser, directly from your coding agent

The fastest way to close the confidence gap is to remove the “context switch” between coding and validation.

The Shiplight Plugin is designed to work with AI coding agents so that an agent can implement a feature, open a browser, and verify the UI change within a single workflow. Shiplight’s documentation, for example, includes a quick start for adding the plugin to Claude Code, as well as configuration patterns for Cursor and Windsurf.

The key is not the tooling detail. It is the workflow shift:

- Your agent writes code.

- Your agent verifies behavior in a browser.

- Verification becomes repeatable coverage, not a one-time check.

This is where quality starts to scale with velocity instead of fighting it.

### 2) Turn verification into durable tests using YAML that stays readable

Shiplight tests can be written as YAML “test flows” using natural language statements. The format is designed to be readable in code review, approachable for non-specialists, and flexible enough for real-world journeys, including step groups, conditionals, loops, and teardown steps.

A minimal example looks like this:

```yaml
goal: Verify user journey
statements:
  - intent: Navigate to the application
  - intent: Perform the user action
  - VERIFY: the expected result
```

When you want speed and determinism, Shiplight also supports “enriched” steps that include Playwright-style locators such as `getByRole(...)`. Importantly, Shiplight treats these locators as a **cache**, not a fragile dependency. If the UI changes and a cached locator goes stale, Shiplight can fall back to the natural language intent to recover.

That design choice matters because it means your tests are no longer hostage to DOM churn. Your suite stays aligned to user intent while execution remains fast when the cached path is valid.

### 3) Operationalize coverage in CI with real reporting and AI diagnosis

Once you have durable flows, the next challenge is operational: running the right suites, in the right environment, at the right time, with outputs your team can act on.

Shiplight Cloud adds the pieces teams typically have to assemble themselves:

- Test suite organization, environments, and scheduled runs

- Cloud execution and parallelism

- Dashboards, results history, and automated reporting

- AI-generated summaries of test results, including multimodal analysis when screenshots are available

For CI, Shiplight provides a GitHub Actions integration that can run one or many suites against a specific environment and report results back to the workflow.

When failures happen, Shiplight’s AI Summary is designed to turn “a wall of logs” into something closer to a diagnosis: what failed, where it failed, what the UI looked like at the failure point, and recommended next steps.
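As a rough illustration of what "closer to a diagnosis" can mean in practice, the sketch below models the four elements named above as a typed record and renders them as a short Markdown comment. The `FailureSummary` shape, its field names, and the sample values are all made up for this example; they do not describe Shiplight's actual output format.

```typescript
// Hypothetical shape for a failure summary (field names are illustrative).
interface FailureSummary {
  whatFailed: string;
  whereItFailed: string;
  uiObservation?: string; // only present when a screenshot was available
  nextSteps: string[];
}

// Renders the summary as Markdown, e.g. for a PR comment or chat message.
function renderSummary(s: FailureSummary): string {
  const lines = [
    `**What failed:** ${s.whatFailed}`,
    `**Where:** ${s.whereItFailed}`,
  ];
  if (s.uiObservation !== undefined) {
    lines.push(`**UI at failure:** ${s.uiObservation}`);
  }
  if (s.nextSteps.length > 0) {
    lines.push("**Next steps:**");
    for (const step of s.nextSteps) lines.push(`- ${step}`);
  }
  return lines.join("\n");
}

// Sample values are invented for the example.
const report = renderSummary({
  whatFailed: "Order confirmation was never displayed",
  whereItFailed: "Statement 3: VERIFY the expected result",
  uiObservation: "A validation error is shown under the card number field",
  nextSteps: ["Check the test card data", "Review recent changes to the payment form"],
});
console.log(report);
```

Keeping the summary structured makes it easy to route: the same record can feed a PR comment, a dashboard, or an alert without re-parsing logs.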

This is where E2E becomes a decision system, not just a gate.

## Choosing the right adoption path (without boiling the ocean)

Different teams adopt Shiplight from different starting points. A practical way to choose: