The QA Role in the AI Era: How Responsibilities, Skills, and Career Paths Are Changing in 2026

Shiplight AI Team

Updated on May 13, 2026

The QA role in 2026 has not disappeared; it has been restructured. The mechanical parts of the job (selector maintenance, manual click-throughs to verify a release, writing automation scripts after a feature ships) have moved to AI systems and to the AI coding agents that wrote the feature in the first place. What remains, and grows in importance, is the human work that defines what quality means: deciding what to test, reviewing autonomously generated tests, owning quality policy, running the exploratory testing no agent thought to do, and handling the regulated and judgment-heavy parts of release decisions. The result is a QA role with six new responsibilities, five distinct career tracks, and a different mix of required skills than the 2015 baseline. This guide walks through each, with concrete patterns for QA engineers transitioning into the new role and for engineering leaders hiring for it.

Before turning to the new responsibilities, here is what the AI era keeps and what it transforms:

| Aspect of the QA role | 2015 baseline | 2026 reality |
|---|---|---|
| Define what "quality" means for the product | QA | QA (unchanged) |
| Decide which user journeys are mission-critical | QA | QA (unchanged) |
| Author E2E tests for new features | QA team | Engineer or coding agent; QA reviews |
| Maintain selectors as the UI changes | QA, 40–60% of hours | AI Fixer / self-healing; QA approves PR patches |
| Run regression manually | QA team | CI gate; QA owns the metric, not the click-through |
| Triage failed CI runs | QA team | AI clusters failures; QA confirms categorization |
| Exploratory testing for surprising paths | QA | QA (unchanged, and more important) |
| Decide release go/no-go | QA + eng lead | QA + eng lead (unchanged) |
| Handle regulated business logic | QA | QA (unchanged, and more important) |

The pattern: the judgment work stays with humans. The mechanical work moves to AI. The QA role evolves toward the judgment-dense end of the spectrum, not away from QA.
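The triage row in the table says AI clusters failures and QA confirms the categorization. To make "clustering" concrete, here is a deliberately simple, non-AI heuristic: normalize each failure message by masking volatile tokens (hex ids, numbers) and group tests that share the resulting signature. The function names and sample failures are hypothetical, and AI-based triage tools presumably use much richer signals; the sketch only shows the shape of the output a QA engineer would then confirm or correct.

```python
import re
from collections import defaultdict


def failure_signature(message: str) -> str:
    """Normalize a failure message so equivalent failures share one key."""
    # Mask hex ids first, so their digits are not masked piecemeal below.
    normalized = re.sub(r"0x[0-9a-fA-F]+", "<hex>", message)
    # Mask remaining numbers (timeouts, ports, counts, status codes).
    normalized = re.sub(r"\d+", "<n>", normalized)
    return normalized.strip().lower()


def cluster_failures(failures: list[tuple[str, str]]) -> dict[str, list[str]]:
    """Group (test_name, error_message) pairs by normalized signature."""
    groups: dict[str, list[str]] = defaultdict(list)
    for test_name, message in failures:
        groups[failure_signature(message)].append(test_name)
    return dict(groups)


# Hypothetical failures from one CI run.
failures = [
    ("checkout_happy_path", "Timeout after 3000 ms waiting for #submit"),
    ("checkout_guest", "Timeout after 5000 ms waiting for #submit"),
    ("billing_update", "Expected status 200, got 500"),
]
for signature, tests in cluster_failures(failures).items():
    print(f"{len(tests)} failure(s): {signature} -> {tests}")
```

The human step is the last one: deciding whether the two timeouts really share a root cause or merely share a message.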

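Worth being explicit about what's being replaced. The 2015 QA engineer typically owned everything in the 2015 column of the table above, including the mechanical work: authoring E2E tests for new features, maintaining selectors as the UI changed (often 40–60% of working hours), running regression by hand, and triaging every failed CI run.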

That role was bottlenecked by a fundamental ratio: a QA engineer could effectively maintain roughly 100–200 E2E tests. Past that point, the hours spent maintaining existing tests consumed all the time available for writing new ones, so net coverage growth was effectively zero. See the human QA bottleneck in agent-first engineering teams.

That ratio is what the AI era breaks — and the QA role evolves into the work that the broken ratio used to crowd out.
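The ratio argument can be checked with a small model, under assumed numbers that are ours, not the article's: each existing test costs a fixed amount of upkeep per week, new tests cost a fixed number of authoring hours, and whatever time is left after upkeep goes to authoring. The suite then grows until upkeep eats the whole week, which puts the ceiling at hours per week divided by upkeep per test. With the hypothetical inputs below (a 40-hour week, 15 minutes of upkeep per test per week, 2 hours to author a test) the ceiling is 160 tests.

```python
def weekly_net_new_tests(hours_per_week: float, maint_hours_per_test: float,
                         author_hours_per_test: float, suite_size: float) -> float:
    """Tests one engineer can add this week after maintaining the existing suite."""
    free_hours = hours_per_week - maint_hours_per_test * suite_size
    return max(free_hours, 0.0) / author_hours_per_test


def equilibrium_suite_size(hours_per_week: float, maint_hours_per_test: float) -> float:
    """Suite size at which maintenance consumes every available hour."""
    return hours_per_week / maint_hours_per_test


def simulate_suite_growth(weeks: int, hours_per_week: float,
                          maint_hours_per_test: float,
                          author_hours_per_test: float) -> list[float]:
    """Suite size at the end of each week, starting from an empty suite."""
    size = 0.0
    history = []
    for _ in range(weeks):
        size += weekly_net_new_tests(hours_per_week, maint_hours_per_test,
                                     author_hours_per_test, size)
        history.append(size)
    return history


# Hypothetical inputs: 40-hour week, 0.25 h upkeep per test per week, 2 h to author a test.
history = simulate_suite_growth(52, 40.0, 0.25, 2.0)
print(f"ceiling: {equilibrium_suite_size(40.0, 0.25):.0f} tests")
print(f"after 52 weeks: {history[-1]:.1f} tests, last week +{history[-1] - history[-2]:.2f}")
```

In this model, more hours only move the ceiling proportionally; lowering per-test upkeep is what raises it, which is the lever self-healing is aimed at.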

The QA engineer (or QA function) owns the written policy that defines what "quality" means for the product. In 2015 this was usually tacit. In 2026, with AI agents authoring tests autonomously, it has to be explicit. The policy answers:

This is governance work, owned at the QA leadership layer. The output is a living document reviewed quarterly. See enterprise-ready agentic QA: a practical checklist.

A 2026 test strategy has six components: scope, authoring model, healing posture, gates, coverage targets, and ownership. QA owns the strategy document, not its tactical execution. See AI-native test strategy in 2026.

In practice: QA partners with engineering leadership to set the operating model, then signs off at quarterly reviews, or sooner when KPIs breach their targets or new tooling enters the stack. The day-to-day work of running the strategy belongs to engineers and coding agents.
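One way to keep the six components from silently going missing is to treat the strategy document as a structured record with one field per component. The sketch below is a hypothetical Python dataclass; the one-line glosses in the comments are our reading of each component, not definitions from the article, and the example values are made up.

```python
from dataclasses import dataclass, fields


@dataclass
class StrategyDocument:
    """The six components of a 2026 test strategy, one free-text field each."""
    scope: str              # which journeys and surfaces the suite must cover
    authoring_model: str    # who writes tests: engineers, coding agents, QA
    healing_posture: str    # what self-healing may change without human review
    gates: str              # which checks block a merge or a release
    coverage_targets: str   # what "enough" coverage means, per journey
    ownership: str          # who owns the document and its quarterly review

    def missing_components(self) -> list[str]:
        """Names of components left blank, for the quarterly review checklist."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


draft = StrategyDocument(
    scope="Signup, checkout, billing",
    authoring_model="Coding agents author; QA reviews every test PR",
    healing_posture="Auto-heal locators; QA approves each patch",
    gates="",
    coverage_targets="Every mission-critical journey has a PR-time test",
    ownership="QA Architect",
)
print(draft.missing_components())
```

A blank field is easy to spot in review; a component that was never written down at all is not, which is the point of fixing the six slots up front.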

The largest net-new responsibility. When AI coding agents author tests via MCP or SDK, someone has to review and approve those tests before they become regression gates. That someone is typically QA:

This is not rubber-stamping. It is the equivalent of code review for tests, applied to a non-human author. See the testing layer for AI coding agents and Shiplight MCP Server.
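Part of that review is mechanical and can be scripted before a human reads the test: does the test actually perform every step of the journey it claims to cover, does it assert anything, and do its assertions refer to things the run actually saw (an assertion on an element that never appeared is a likely hallucination)? The `GeneratedTest` record below is a hypothetical summary a reviewer might assemble from the test file and a recorded run; it is not the format of Shiplight MCP or any other tool.

```python
from dataclasses import dataclass


@dataclass
class GeneratedTest:
    """Hypothetical summary of an agent-authored E2E test, as a reviewer sees it."""
    name: str
    claimed_journey: list[str]    # steps the agent says the test covers
    performed_steps: list[str]    # steps the test actually executes
    assertions: list[str]         # elements or states the test asserts on
    observed: set[str]            # elements seen in a recorded run (assumed available)


def review_findings(test: GeneratedTest) -> list[str]:
    """Return reviewer findings; an empty list means no mechanical red flags."""
    findings = []
    skipped = [s for s in test.claimed_journey if s not in test.performed_steps]
    if skipped:
        findings.append(f"claims but never performs: {skipped}")
    if not test.assertions:
        findings.append("performs steps but asserts nothing")
    phantom = [a for a in test.assertions if a not in test.observed]
    if phantom:
        findings.append(f"asserts on elements never observed (possible hallucination): {phantom}")
    return findings


candidate = GeneratedTest(
    name="guest checkout",
    claimed_journey=["add to cart", "enter shipping", "pay", "see confirmation"],
    performed_steps=["add to cart", "enter shipping", "see confirmation"],
    assertions=["order number", "loyalty points banner"],
    observed={"cart badge", "shipping form", "order number"},
)
for finding in review_findings(candidate):
    print(finding)
```

Passing these checks only clears the mechanical red flags. Whether this journey deserves to be a regression gate at all is the judgment call that stays with the reviewer.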

The bug class that AI is worst at catching is the one that exists in flows nobody documented. A QA engineer manually exploring the application — trying weird inputs, racing two windows, abandoning a checkout halfway — surfaces bugs no autonomous explorer prioritizes. In 2026 this work is more important, not less, because:

The 2026 QA engineer probably spends a larger fraction of the week on exploratory testing than the 2015 QA engineer did, even though headcount is flat.

When a test fails intermittently without code changes, what happens? In 2015, a human investigated each one. In 2026, AI clusters and categorizes failures automatically — but policy still belongs to QA:

See test flakiness budget, quarantine test, and from flaky tests to actionable signal.

For products in finance, healthcare, payments, or other regulated industries, some testing decisions cannot be delegated to AI even when the technology could plausibly handle them. Examples:

These are the decisions auditors will ask about. They belong to a named human, and that human is typically QA leadership or a specialist QA engineer. See best self-healing test automation tools for enterprises.
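"A named human" can be enforced at merge time rather than left to convention. The sketch below assumes a team tags tests that encode regulated business logic and keeps an explicit list of people allowed to approve changes to them; the tag names, the `SuiteChange` record, and the approver names are all hypothetical. Changes to untagged tests fall through to normal review, while regulated ones are blocked unless a listed person approved them, which also leaves an auditable reason string.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical tags a team might use to mark regulated business logic.
REGULATED_TAGS = frozenset({"payments", "phi", "kyc"})


@dataclass(frozen=True)
class SuiteChange:
    """A proposed change to one test, e.g. a self-healing patch or an edit."""
    test_id: str
    tags: frozenset[str]
    approved_by: Optional[str]  # person (or bot) that approved the change, if any


def merge_decision(change: SuiteChange, named_approvers: set[str]) -> tuple[bool, str]:
    """Regulated changes need a named human approver; others follow normal review."""
    regulated = change.tags & REGULATED_TAGS
    if not regulated:
        return True, "not regulated: normal review applies"
    if change.approved_by in named_approvers:
        return True, f"{sorted(regulated)} approved by {change.approved_by}"
    return False, f"{sorted(regulated)} requires a named approver, got {change.approved_by!r}"


approvers = {"dana.qa-lead"}
healed = SuiteChange("refund-limit", frozenset({"payments"}), approved_by="autohealer-bot")
signed = SuiteChange("refund-limit", frozenset({"payments"}), approved_by="dana.qa-lead")
print(merge_decision(healed, approvers))
print(merge_decision(signed, approvers))
```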

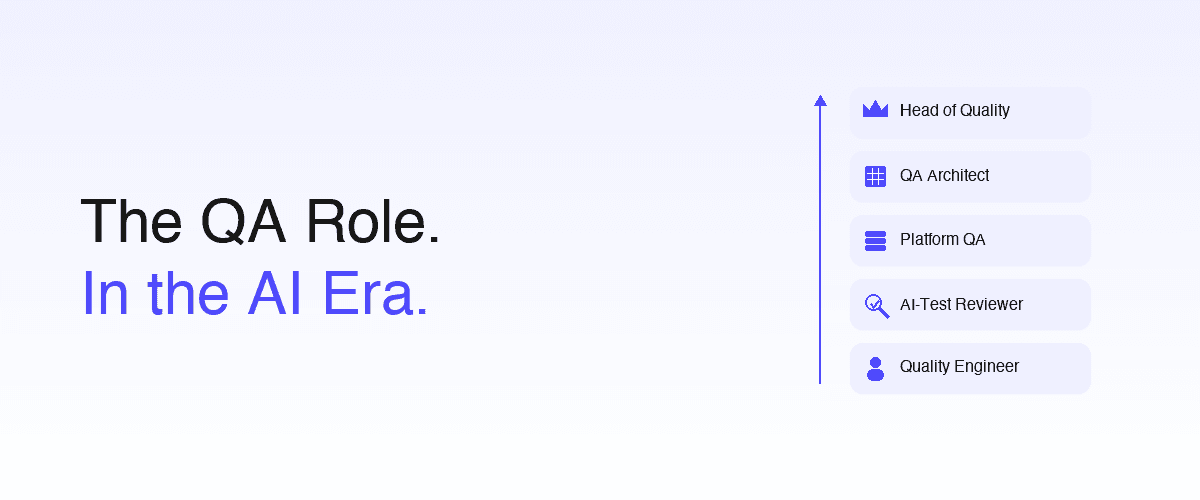

The traditional ladder ("Junior QA → Senior QA → QA Manager") still exists, but five distinct career tracks are emerging as the role specializes:

QA Architect: Owns the operating model (test strategy, tooling decisions, gate design, metric dashboards). Reports to engineering or product leadership. Heavy on judgment, light on hands-on test writing. Outputs: strategy documents, RFCs, quarterly reviews.

AI-Test Reviewer: The QA engineer who specializes in reviewing autonomously generated tests at scale. Becomes expert in the failure modes of the team's coding agents and testing platform. Outputs: approved test PRs, quality-drift reports, fine-tuning feedback for the test-generation pipeline.

Quality Engineer (IC): The hands-on individual contributor role that combines exploratory testing, ad-hoc automation, and feature-level quality ownership. Looks closest to a 2015 senior QA engineer but spends less time on selector maintenance and more on exploration. Outputs: bug reports, exploratory test sessions, automation for flows agents missed.

QA Platform Engineer: Owns the testing infrastructure itself (CI gates, runner pools, observability for the test suite, integration with the AI testing platform). Often comes from an SRE or platform-engineering background and adds the QA lens. Outputs: gate uptime, test-runner cost optimization, integration with Shiplight Plugin, AI SDK, and MCP Server.

Head of Quality: The executive role. Owns the quality-function P&L, hiring, vendor relationships, and the regulatory and audit interface. Translates between product, engineering, and external stakeholders (compliance, customers, auditors).

Most teams won't have all five named explicitly, but a single QA engineer often plays two or three of these depending on org size.

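The skill mix shifts. Three buckets:

Grow in importance: quality policy authorship, test review at scale, exploratory testing methodology, cross-functional communication, and fluency in intent-based testing and MCP-based agent integration.

Stay important: domain knowledge, bug-reporting discipline, and test data management.

Shrink in importance: hand-writing selectors, manual regression click-through, and defect-triage volume.

The directional change: more time on what should be true and less time on getting tests to pass. See the future of QA for the conceptual framing.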

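Three common org models in 2026:

Embedded QA: one QA engineer per feature team, pairing closely with the engineers and the coding agent.

Quality guild + platform QA: a central tooling team plus distributed "quality champions," who are often engineers.

Centralized QA function: a separate function reporting to the CTO, common in regulated industries.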

The single failure mode all three avoid: separating QA physically from the engineers who ship code. The 2026 default keeps QA close enough to the build loop to see failures as they happen, regardless of reporting line.

A few things the QA role is sometimes mischaracterized as in the AI era — none of them accurate:

For practitioners already in QA, the practical transition path:

Month 1 — Build the new toolchain fluency. Get comfortable reading intent-based YAML tests, reviewing PRs with auto-generated tests, navigating a CI gate dashboard.

Month 2 — Move work up the stack. Less time on selector maintenance (let the AI Fixer handle it); more time on test strategy, policy authorship, and reviewing agent-generated tests.

Month 3 — Specialize. Pick a track (architect / reviewer / quality engineer / platform / head). The five tracks above are differentiated enough that most engineers gravitate toward one within a few months.

Quarter 2+ — Lead. Once fluent in the new model, the highest-leverage move is teaching it. Document the team's quality policy. Run "test review" guild sessions. Mentor engineers on intent-based test authoring. The QA engineer who can articulate why quality decisions are made the way they are is the one who scales as the org grows.

Two patterns:

Hiring an experienced QA engineer: Look for evidence of judgment work, not just automation throughput. Strong signals: has authored a test strategy document; has reviewed AI-generated tests; has owned a quality policy. Weak signals: number of Playwright tests written, certifications, time-in-role.

Promoting an engineer into QA: A backend or frontend engineer who shipped a feature with thoughtful test coverage and clear documentation often translates well into a quality-engineer track. Lower hiring risk than going external; same competency profile.

The interview should test review and judgment, not pure automation skill. Ask candidates to review a deliberately flawed agent-generated test. Ask them to draft a quality policy for a hypothetical product. Ask them about a time they pushed back on shipping. Automation coding skill matters but is no longer the bottleneck.
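A deliberately flawed test for that exercise does not need a browser. The hypothetical example below uses a plain Python function as the code under test, a "generated" check whose assertion cannot discriminate between correct and broken implementations, and a reviewed check that states expected values independently and adds an edge case. A plausible regression (the discount added instead of subtracted) shows the difference: the generated check passes it, the reviewed check does not.

```python
def order_total(prices: list[float], discount_rate: float) -> float:
    """Code under test: sum the cart, apply a fractional discount, round to cents."""
    return round(sum(prices) * (1 - discount_rate), 2)


def buggy_order_total(prices: list[float], discount_rate: float) -> float:
    """A plausible regression: the discount is added instead of subtracted."""
    return round(sum(prices) * (1 + discount_rate), 2)


def generated_check(total_fn) -> bool:
    # Flaw for the candidate to spot: only the return type is checked,
    # so any implementation that returns a float passes, correct or not.
    return isinstance(total_fn([10.0, 20.0], 0.1), float)


def reviewed_check(total_fn) -> bool:
    # Expected values are stated independently of the implementation,
    # plus an edge case (empty cart) the generated check never exercised.
    return total_fn([10.0, 20.0], 0.1) == 27.0 and total_fn([], 0.1) == 0.0


for fn in (order_total, buggy_order_total):
    print(fn.__name__, "generated:", generated_check(fn), "reviewed:", reviewed_check(fn))
```

A strong candidate names the flaw (the assertion cannot fail for a wrong total), proposes independent expected values, and asks which edge cases the product actually cares about.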

The QA role in 2026 owns the judgment-dense parts of quality work: defining what quality means for the product, owning the test strategy, reviewing autonomously-generated tests, running exploratory testing, setting flake and quarantine policy, and handling regulated-domain decisions. The mechanical parts of the 2015 QA role — selector maintenance, manual regression click-throughs, writing automation scripts after features ship — have moved to AI systems and to the AI coding agents that built the feature.

AI does not replace the QA role; it replaces specific tasks within it (selector maintenance, manual clicking, test authoring). Most teams report stable QA headcount with 5–10× coverage growth: the same number of people doing higher-leverage work. The 2026 QA engineer spends more time on exploratory testing, test strategy, agent oversight, and quality policy than the 2015 QA engineer did, not less.

Six new (or newly emphasized) responsibilities: (1) quality policy authorship, (2) test strategy ownership, (3) oversight of AI-coding-agent-authored tests, (4) exploratory testing, (5) flaky-test and quarantine policy, (6) regulated-domain judgment. Each is a judgment-heavy task that does not delegate well to AI, even in 2026.

Growing in importance: quality policy authorship, test review at scale, exploratory testing methodology, cross-functional communication, fluency in intent-based testing and MCP-based agent integration. Staying important: domain knowledge, bug-reporting discipline, test data management. Shrinking in importance: hand-writing selectors, manual regression click-through, defect-triage volume. The shift is from "getting tests to pass" toward "defining what should be true."

Five distinct tracks: (1) QA Architect, who owns the operating model and strategy; (2) AI-Test Reviewer, who specializes in reviewing autonomously generated tests; (3) Quality Engineer (IC), who does hands-on exploratory and feature-level quality work; (4) QA Platform Engineer, who owns testing infrastructure and tooling; (5) Head of Quality, the executive role that owns the function. Most engineers gravitate to one or two of these within months of working in the new model.

Three common 2026 models: (1) embedded QA — one engineer per feature team, pairs closely with engineers and the coding agent; (2) quality guild + platform QA — central tooling team plus distributed "quality champions" who are often engineers; (3) centralized QA function — separate function reporting to CTO, common in regulated industries. The single anti-pattern all three avoid: physically separating QA from the engineers who ship.

When an AI coding agent authors tests via an SDK or MCP server (like Shiplight MCP), QA reviews those tests in PR — the same way an engineer reviews code. Specifically: catching hallucinated assertions, confirming the test actually covers the claimed user journey, and periodically auditing the agent-generated portion of the suite for quality drift. This is the largest net-new responsibility in the 2026 QA role.

Exploratory testing matters more in 2026 than before, not less. AI handles the regression-suite floor (intent-based tests, self-healing, autonomous-flow execution), which frees QA from the 40–60% maintenance overhead that used to crowd out exploratory work. Exploratory testing also finds the bug class AI is worst at catching: surprising flows nobody documented. Findings from exploratory sessions become candidate regression tests that feed back into the automated suite.

The transition takes about three months: (1) build toolchain fluency by getting comfortable with intent-based YAML, PR-based test review, and CI-gate dashboards; (2) shift work up the stack, with less selector maintenance and more strategy and policy authorship; (3) specialize by picking one of the five career tracks. After that, the highest-leverage move is teaching the new model to others on the team.

Look for evidence of judgment work, not automation throughput. Strong signals: candidate has authored a test strategy document, has reviewed AI-generated tests, has owned a quality policy or governed a flake budget. Weak signals: number of Playwright tests written, generic certifications, time-in-role at previous jobs. Interview for review skill and quality judgment — give candidates a flawed AI-generated test to critique, ask them to draft a policy, ask about a time they pushed back on shipping.

---

The 2026 QA role demands more judgment, more communication, more cross-functional skill — and less mechanical execution. The engineers who thrive in it are the ones who saw the selector-maintenance treadmill as a constraint, not a job description, and who took the freed time to invest in policy, strategy, and review skill. The teams that thrive are the ones that resisted the temptation to "automate QA away" and instead used AI to amplify what the human QA function does best.

For QA practitioners building the new toolchain fluency, Shiplight AI is the system most teams use to operationalize the six new responsibilities: YAML Test Format for reviewable intent-based tests, AI Fixer for self-healing as default, MCP Server for agent oversight at scale, and Cloud runners for PR-time gates that surface the right judgment calls at the right time. Book a 30-minute walkthrough and we'll map the six new responsibilities to the workflows your team already runs.