Test Harness Engineering for AI Test Automation (2026 Guide)

Shiplight AI Team

Updated on May 27, 2026

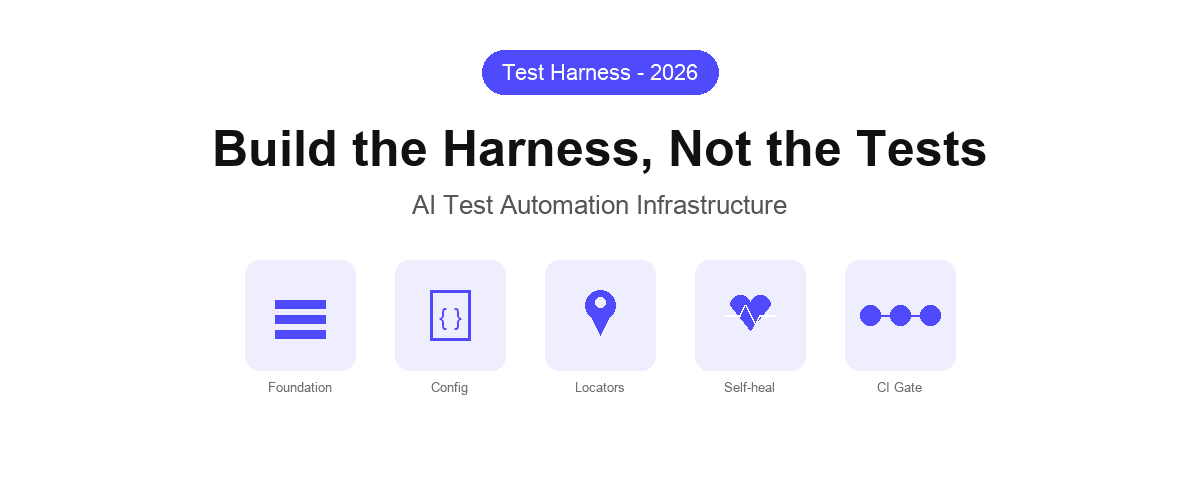

A test harness is the infrastructure layer that surrounds your tests: the fixtures, configuration, environment management, data setup, and execution scaffolding that make individual tests runnable, repeatable, and meaningful. In traditional testing, building a good harness is an engineering discipline in its own right. In AI test automation, it is the critical differentiator between a fragile prototype and a production-grade quality system.

As AI coding agents accelerate feature delivery, the harness needs to keep pace. This guide covers the core techniques for test harness engineering that work with AI test automation — not against it.

A test harness is everything that is not the test itself: the fixtures, configuration, environment management, data setup, and execution scaffolding that surround it.

In manual testing, the harness is implicit — testers carry this context in their heads. In automated testing, the harness is explicit and must be maintained as carefully as the tests themselves. In AI test automation, where tests are generated at machine speed and the application changes frequently, the harness design determines whether your test suite grows sustainably or collapses under its own weight.

Traditional test harnesses are built around a stable, human-paced development cycle. The harness assumes:

AI coding agents break all four assumptions. An agent refactors a component in minutes, renames classes across files, and restructures DOM hierarchies as a side effect of implementing an unrelated feature. Tests that depend on `#submit-btn` or `.checkout-form__total` fail constantly, not because the application broke, but because the selectors they hard-code have gone stale.

The result: teams either cap their test suites at a size they can manually maintain, or they accept a permanent background noise of broken tests that get disabled rather than fixed. Neither outcome is acceptable for teams shipping at AI speed.

The most important structural decision in a modern test harness is how tests express what they are testing. Traditional harnesses store locators as the source of truth. Intent-based harnesses store the user goal as the source of truth and treat locators as a derived, cached artifact.

In practice, this means each test step describes what a user is doing — not how the DOM is currently structured:

```yaml
goal: Verify checkout flow completes successfully
base_url: https://app.example.com
statements:
  - URL: /cart
  - intent: Click the Proceed to Checkout button
  - intent: Fill in shipping address with test data
  - intent: Select standard shipping
  - intent: Click Place Order
  - VERIFY: Order confirmation number is visible
```

When the UI changes (a button moves, a class is renamed, a container is restructured), the intent remains valid. The harness resolves the correct element against the current page state rather than failing on a stale selector. This is the foundation of the intent-cache-heal pattern: intent as the authoritative definition, cached locators for execution speed, AI resolution when the cache misses.
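To make the "intent as source of truth" idea concrete, here is a minimal sketch of how a harness might model the steps of such a test once the YAML has been parsed into plain Python dictionaries. The `Step` class, `STEP_KINDS`, and `parse_statements` are hypothetical names for illustration, and the three step kinds are taken only from the example above; the actual format may define more.

```python
from dataclasses import dataclass

# Step kinds seen in the example above (assumption: the real format may have more).
STEP_KINDS = ("URL", "intent", "VERIFY")


@dataclass(frozen=True)
class Step:
    kind: str  # "URL", "intent", or "VERIFY"
    text: str  # user-facing description; never a CSS or XPath selector


def parse_statements(statements):
    """Turn an already-parsed `statements` list into Step objects.

    Each entry must be a one-key mapping such as {"intent": "Click Place Order"}.
    Unknown keys are rejected so that selectors cannot sneak into a test file.
    """
    steps = []
    for index, entry in enumerate(statements):
        if len(entry) != 1:
            raise ValueError(f"statement {index} must have exactly one key")
        (kind, text), = entry.items()
        if kind not in STEP_KINDS:
            raise ValueError(f"statement {index} has unknown kind {kind!r}")
        steps.append(Step(kind=kind, text=str(text)))
    return steps


checkout = parse_statements([
    {"URL": "/cart"},
    {"intent": "Click the Proceed to Checkout button"},
    {"VERIFY": "Order confirmation number is visible"},
])
```

Nothing in a `Step` refers to the DOM, so a UI refactor never invalidates the stored test. Only the derived locator has to be recomputed.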

A test harness that lives outside version control is a harness you cannot trust, audit, or reproduce. The configuration layer — environment URLs, test suites, execution parameters — should live in your repository alongside application code.

YAML-based test configuration makes this natural. Each test file is a human-readable YAML document that specifies the goal, the base URL, and the sequence of user actions. The harness configuration is a separate YAML file that references these test files and defines execution parameters:

```yaml
suite: checkout-regression
environment: staging
base_url: https://staging.example.com
tests:
  - tests/checkout/full-flow.yaml
  - tests/checkout/guest-checkout.yaml
  - tests/checkout/promo-code.yaml
parallelism: 4
fail_fast: false
```

Because this configuration lives in the repository alongside the application code, every change to the suite can be reviewed, audited, and reproduced like any other commit.
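As a rough illustration of how a runner could consume a suite configuration like the one above, the sketch below validates the parsed configuration and groups test files into batches of `parallelism`. It assumes the YAML has already been loaded into a dictionary, and `SuiteConfig`, `load_suite`, and `plan_batches` are hypothetical names, not part of any specific tool.

```python
from dataclasses import dataclass

REQUIRED_KEYS = ("suite", "environment", "base_url", "tests")


@dataclass(frozen=True)
class SuiteConfig:
    suite: str
    environment: str
    base_url: str
    tests: tuple
    parallelism: int = 1
    fail_fast: bool = False


def load_suite(raw):
    """Validate a parsed suite configuration and return a typed object."""
    missing = [key for key in REQUIRED_KEYS if key not in raw]
    if missing:
        raise ValueError("missing required keys: " + ", ".join(missing))
    tests = tuple(raw["tests"])
    if len(set(tests)) != len(tests):
        raise ValueError("a test file is listed more than once")
    parallelism = int(raw.get("parallelism", 1))
    if parallelism < 1:
        raise ValueError("parallelism must be at least 1")
    return SuiteConfig(
        suite=raw["suite"],
        environment=raw["environment"],
        base_url=raw["base_url"],
        tests=tests,
        parallelism=parallelism,
        fail_fast=bool(raw.get("fail_fast", False)),
    )


def plan_batches(config):
    """Group test files into batches of at most `parallelism` files each."""
    size = config.parallelism
    return [list(config.tests[i:i + size]) for i in range(0, len(config.tests), size)]


config = load_suite({
    "suite": "checkout-regression",
    "environment": "staging",
    "base_url": "https://staging.example.com",
    "tests": [
        "tests/checkout/full-flow.yaml",
        "tests/checkout/guest-checkout.yaml",
        "tests/checkout/promo-code.yaml",
    ],
    "parallelism": 2,
    "fail_fast": False,
})
batches = plan_batches(config)
```

Validating the configuration up front means a typo in a reviewed file fails immediately with a clear message rather than surfacing as a confusing mid-run error.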

Speed and resilience are usually in tension in test harnesses. Fast tests use cached locators. Resilient tests use AI resolution. A well-designed harness does not choose — it uses both, with a fallback strategy.

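The pattern is intent-cache-heal: the intent is the authoritative definition of each step, a cached locator is tried first for execution speed, and AI resolution runs only when the cache misses.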

This architecture means the harness is deterministic and fast in the common case (the UI has not changed) and resilient in the edge case (the UI has changed). The self-healing layer is invoked rarely, keeping execution speed predictable.
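The fast-path and fallback behaviour described here can be sketched in a few lines. This is a toy model under stated assumptions: a "page" is just a dictionary mapping selectors to visible labels, and `label_match_heal` is a stand-in for the AI resolution step, which in a real harness would be a model call. `IntentResolver` and its methods are hypothetical names.

```python
from typing import Callable, Dict, Optional

# Hypothetical page model: selector -> visible label of the element.
Page = Dict[str, str]


class IntentResolver:
    """Resolve intents to selectors: cached locator first, heal on a miss."""

    def __init__(self, heal: Callable[[str, Page], Optional[str]]):
        self._heal = heal
        self._cache: Dict[str, str] = {}
        self.heal_calls = 0  # how often the slow path was needed

    def resolve(self, intent: str, page: Page) -> str:
        cached = self._cache.get(intent)
        if cached is not None and cached in page:
            return cached  # fast, deterministic path: the UI has not changed
        # Cache miss or stale locator: fall back to resolving from intent.
        self.heal_calls += 1
        selector = self._heal(intent, page)
        if selector is None or selector not in page:
            raise LookupError(f"no element found for intent: {intent!r}")
        self._cache[intent] = selector  # refresh the cache for later runs
        return selector


def label_match_heal(intent: str, page: Page) -> Optional[str]:
    """Toy stand-in for AI resolution: match an element label inside the intent."""
    for selector, label in page.items():
        if label.lower() in intent.lower():
            return selector
    return None


resolver = IntentResolver(label_match_heal)
before_refactor = {"#submit-btn": "Place Order"}
after_refactor = {"button.order-cta": "Place Order"}

first = resolver.resolve("Click Place Order", before_refactor)
second = resolver.resolve("Click Place Order", before_refactor)  # served from cache
healed = resolver.resolve("Click Place Order", after_refactor)   # stale, so healed
```

The intent string is the only cache key, so the test file never changes when the selector does. Only the cache entry is rewritten.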

For AI-driven development workflows, where the application changes on every agent commit, this is the only sustainable approach. See self-healing vs. manual maintenance for a detailed comparison of the maintenance burden across approaches.

AI coding agents generate tests rapidly, but they do not have visibility into shared fixture state. A naive harness lets tests share mutable state: one test logs in, creates a record, and leaves it for the next test. This works until two tests run in parallel and corrupt each other's state.
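One way to avoid the shared-state problem just described is to give every test its own namespaced records and clean them up when the test ends. The sketch below assumes an in-memory `FakeStore` in place of the application's real data layer; `isolated_records` is a hypothetical helper, not an API of any particular harness.

```python
import uuid
from contextlib import contextmanager


class FakeStore:
    """In-memory stand-in for the application's data store (assumption)."""

    def __init__(self):
        self.records = {}

    def create(self, key, value):
        self.records[key] = value

    def delete(self, key):
        self.records.pop(key, None)


@contextmanager
def isolated_records(store, test_name):
    """Yield a `create` function whose records are private to one test."""
    namespace = f"{test_name}-{uuid.uuid4().hex[:8]}"
    created = []

    def create(name, value):
        key = f"{namespace}/{name}"
        store.create(key, value)
        created.append(key)
        return key

    try:
        yield create
    finally:
        # Remove only what this test created, even if the test failed.
        for key in created:
            store.delete(key)


store = FakeStore()
with isolated_records(store, "guest-checkout") as create_a:
    with isolated_records(store, "promo-code") as create_b:
        key_a = create_a("order", {"total": 10})
        key_b = create_b("order", {"total": 20})
        records_during_run = dict(store.records)
```

Both tests create a record named `order`, yet neither can overwrite the other, which is what makes parallel execution safe.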

Robust harness engineering for AI test automation requires fixture isolation:

For authentication specifically, the most reliable pattern is to log in once per test run, save the session state, and reuse it across tests in that run — without re-authenticating on every step. Shiplight's harness supports session state persistence out of the box, which is particularly important for testing SSO, 2FA, and magic link flows.
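The log-in-once pattern can be sketched independently of any particular tool. The example below is not Shiplight's API; it assumes the session state is JSON-serialisable (for example, a list of cookies) and that `login` is a hypothetical callable that performs the real authentication. The state file is expected to live in a directory created for a single test run.

```python
import json
from pathlib import Path
from typing import Callable


def load_or_create_session(path: Path, login: Callable[[], dict]) -> dict:
    """Return saved session state for this run, logging in only if none exists."""
    if path.exists():
        # A previous test in this run already authenticated: reuse its state.
        return json.loads(path.read_text())
    state = login()
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(state))
    return state
```

Scoping the file to one run keeps sessions from leaking between runs while still paying the cost of SSO, 2FA, or a magic link only once.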

A test harness is only valuable if its results are actionable. The final layer of harness engineering is integrating execution results into your CI pipeline as a blocking gate — not an advisory report.

The harness should:

GitHub Actions integration for a YAML-based harness looks like this:

```yaml
name: E2E Regression Suite
on:
  pull_request:
    branches: [main, staging]
jobs:
  e2e:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Run E2E harness
        uses: shiplight-ai/github-action@v1
        with:
          api-token: ${{ secrets.SHIPLIGHT_TOKEN }}
          suite-id: ${{ vars.SUITE_ID }}
          fail-on-failure: true
```

When an AI coding agent opens a PR that breaks a test, the CI gate catches it. The agent receives the structured failure output and can diagnose and fix the issue before the PR reaches human review. This closes the AI-native QA loop (write, verify, gate, fix) without waiting for a human to click through the feature.
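To show what a blocking gate with structured failure output might look like in a generic runner, here is a small sketch that turns test results into an exit code and a JSON report. The report fields and the names `CaseResult` and `gate` are assumptions for illustration; they do not describe the output format of any specific product.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class CaseResult:
    name: str
    passed: bool
    failed_step: str = ""  # the intent or VERIFY text that failed, if any
    message: str = ""


def gate(results):
    """Return (exit_code, json_report); a non-zero exit code blocks the PR."""
    failures = [result for result in results if not result.passed]
    report = {
        "total": len(results),
        "failed": len(failures),
        "failures": [
            {"test": f.name, "step": f.failed_step, "message": f.message}
            for f in failures
        ],
    }
    exit_code = 1 if failures else 0
    return exit_code, json.dumps(report, indent=2)


exit_code, report_json = gate([
    CaseResult(name="full-flow", passed=True),
    CaseResult(
        name="promo-code",
        passed=False,
        failed_step="VERIFY: Discount is applied to the total",
        message="total did not change after applying the code",
    ),
])
```

A CI step would pass `exit_code` to `sys.exit` and publish `report_json` as an artifact or PR comment, which gives a coding agent the failing test, the failing step, and the reason in machine-readable form.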

A complete test harness does not need to be built all at once. The practical sequence:

Each step adds value independently. A single self-healing test wired into CI is more valuable than a comprehensive suite that runs manually on a schedule.

A test framework provides the primitives for writing and running tests (assertions, test runners, reporters). A test harness is the application-specific layer built on top: the fixtures, configuration, authentication helpers, and execution infrastructure specific to your application. Playwright is a framework. The YAML configuration, session fixtures, and CI integration that surround your Playwright tests are the harness.

Intent-based tests define what the user is doing rather than which DOM element to interact with. When the UI changes — a class renames, a component restructures, a button moves — the intent remains valid and the harness resolves the correct element automatically. This eliminates the most common source of harness maintenance: updating stale selectors after UI changes.

Two techniques keep the harness stable when AI agents change the UI: self-healing locators that resolve from intent when the cached locator fails, and intent-based test definitions that remain valid through UI restructuring. Together, these mean the harness does not need to be updated every time the agent refactors a component. The intent-cache-heal pattern is the practical implementation of both.

Intent-based YAML test files can be authored by humans, generated by AI agents, or produced by a combination of the two. The harness executes them identically. This is important for teams that use AI agents to generate initial test coverage and then refine tests manually.

A well-designed harness should support GitHub Actions, GitLab CI, Azure DevOps, and CircleCI through standard API-based triggers. Shiplight's harness integration works with all four through either a native GitHub Action or API-based triggers for other pipelines.

---

References: Playwright Documentation, GitHub Actions documentation, Google Testing Blog