Software Testing Basics in 2026: What Changed and How to Catch Up

Shiplight AI Team

Updated on May 11, 2026

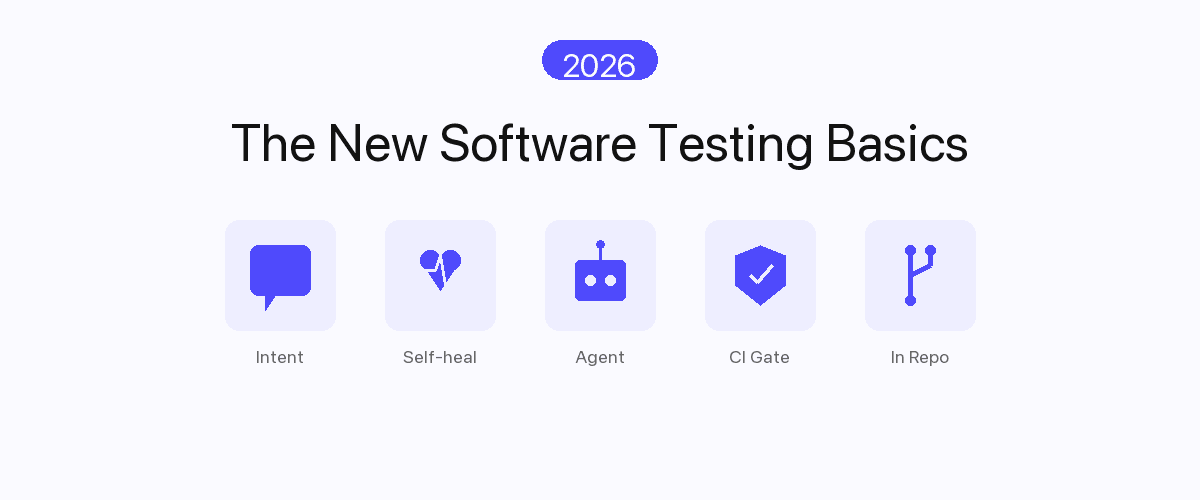

Software testing basics in 2026 look almost nothing like the basics taught five years ago. The unit of authorship is user intent, not DOM selectors. Self-healing is the default, not a paid add-on. Verification happens inside the AI coding agent's session, not in a separate QA cycle days later. And the test suite is judged on machine-speed feedback, not on lines of Playwright. If your "basics" still mean "record a click path, watch it break on the next refactor, repeat" — this is the catch-up.

---

The reason the basics shifted isn't that QA invented new theory. It's that the rest of the stack changed. AI coding agents like Claude Code, Cursor, and OpenAI Codex ship features in the time it used to take to write the test plan. Pull requests multiplied. UI changes became continuous. The 2020-era testing playbook — write Playwright, run on merge, fix selectors when they drift — collapsed under the new throughput.

This post is the 2026 replacement playbook: five basics that actually hold up, what each one means concretely, and which Shiplight feature implements it so you can adopt them in the same week you read about them.

Read the rest for the why behind each, and how to upgrade your existing stack without rewriting it.

| Dimension | 2020 Software Testing Basics | 2026 Software Testing Basics |
|---|---|---|
| Authored as | Playwright/Cypress/Selenium code, bound to CSS/XPath | Natural-language intent ("click checkout"), bound to user actions |
| Survives UI change? | No: every refactor breaks selector bindings | Yes: intent re-resolves against the current DOM (intent-cache-heal pattern) |
| Authored by | A human engineer at typing speed | The AI coding agent in the same session, at agent speed |
| Verification runs | Nightly or on merge | On every pull request, in real browsers, before review |
| Maintenance cost | 40–60% of QA time on selector upkeep | Near-zero: auto-heal handles UI drift; humans approve patches |
| Owned by | A QA team, separate cycle | The product engineer who shipped the change |
| Failure signal | Red CI run with stack trace, often flaky | Replay video + DOM snapshot + actionable diff per failure |
| Coverage shape | Whatever someone had time to write | Whatever the agent generated during the build, plus regression carry-forward |

If your shop is still in the left column on most rows, you're not "behind on the latest stuff." You're operating below the 2026 floor. The good news: the two columns can coexist, so you can adopt the right-hand one incrementally without a rewrite. Each basic below stands alone.

The single biggest shift in 2026 software testing basics is how a test is written. The old default — bind a step to a CSS selector or XPath — turned every test into a tripwire for refactors. Rename a class, swap a component library, A/B test a button label, and silent test failures stack up overnight.

The 2026 default is the opposite: a test step is a natural-language statement of user intent, and the runner resolves it to a DOM element at execution time.

    - intent: Add the first product to the cart
    - intent: Proceed to checkout
    - VERIFY: order confirmation page shows order number

Compare to:

    await page.locator('button.btn-primary[data-testid="add-to-cart"]').click();
    await page.locator('a[href="/checkout"]').click();
    await expect(page.locator('h1#order-confirmation')).toContainText(/Order #\d+/);

The Playwright version is precise but brittle. The YAML version is portable across refactors and readable by a non-engineer reviewing a PR. See the intent-first E2E testing guide for the deeper rationale.
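To make "resolved at execution time" concrete, here is a minimal TypeScript sketch. It is not Shiplight's resolver: the `UiElement` shape, the `resolveIntent` function, and the word-overlap scoring are all assumptions picked for illustration, and a real runner would work against the live DOM with far richer signals.

```typescript
// Hypothetical, simplified stand-in for an element on the rendered page.
interface UiElement {
  role: "button" | "link" | "heading";
  text: string;     // visible label
  selector: string; // whatever the current markup happens to be
}

// Lowercase a phrase and split it into words for a crude overlap score.
function words(phrase: string): string[] {
  return phrase.toLowerCase().split(/[^a-z0-9]+/).filter((w) => w.length > 0);
}

// Pick the element whose visible text shares the most words with the intent.
// Returns undefined when no element shares any word with it.
function resolveIntent(intent: string, page: UiElement[]): UiElement | undefined {
  const wanted = words(intent);
  let best: UiElement | undefined;
  let bestScore = 0;
  for (const el of page) {
    const score = words(el.text).filter((w) => wanted.indexOf(w) !== -1).length;
    if (score > bestScore) {
      best = el;
      bestScore = score;
    }
  }
  return best;
}

// The same intent, resolved against the page before and after a refactor
// that renamed classes and turned the checkout link into a button.
const beforeRefactor: UiElement[] = [
  { role: "button", text: "Add to cart", selector: "button.btn-primary" },
  { role: "link", text: "Proceed to checkout", selector: 'a[href="/checkout"]' },
];
const afterRefactor: UiElement[] = [
  { role: "button", text: "Add to cart", selector: "button.cta-add" },
  { role: "button", text: "Proceed to checkout", selector: "button.cta-checkout" },
];

const intent = "Proceed to checkout";
const resolvedBefore = resolveIntent(intent, beforeRefactor);
const resolvedAfter = resolveIntent(intent, afterRefactor);
```

The test step never changes between the two runs; only the element it resolves to does. That is the property a selector-bound step cannot have.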

Shiplight feature. Shiplight YAML Test Format is the intent-based test language. Tests are plain YAML files committed alongside source — code-reviewable, diff-able, grep-able, version-controlled. No proprietary UI to learn, no vendor lock-in on the test definitions themselves.

In 2020, "self-healing tests" was a premium feature. In 2026, it's the floor. The reason is throughput: if AI coding agents ship 10x more UI changes per week, a test suite that requires human selector maintenance is a permanent bottleneck.

What "self-healing as default" actually means in practice:

- An element changes from a `<button>` to an `<a>`. The next run still finds it by text, role, and position, and the test passes.

The last property, patches surfaced for review rather than applied silently, is the one most "self-healing" tools get wrong. Silent auto-edits to tests destroy auditability. Patches reviewed in PR preserve it. Read more in how to fix flaky E2E tests and self-healing vs manual maintenance.

Shiplight feature. Self-healing is built into Shiplight Plugin — every test run uses the AI Fixer to resolve intent against the current DOM. When the Fixer can't resolve a step confidently, it proposes the patch as a reviewable diff. You keep the audit trail; you lose the manual selector maintenance.
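A rough sketch of that decision, resolve when confident and otherwise propose a patch, might look like the following. Everything here is hypothetical: the `Fingerprint`, `Candidate`, and `HealResult` types, the point weights, and the threshold of 70 are illustration values, not the AI Fixer's actual behavior.

```typescript
// Hypothetical shapes; none of these names come from Shiplight's API.
interface Fingerprint { role: string; text: string; position: number; }
interface Candidate extends Fingerprint { selector: string; }

type HealResult =
  | { kind: "resolved"; selector: string; confidence: number }
  | { kind: "needs-review"; proposedPatch: string; confidence: number };

// Score a candidate on text, role, and position.
// Integer points (max 100) avoid floating-point surprises; weights are arbitrary.
function score(fp: Fingerprint, c: Candidate): number {
  const textPoints = fp.text.toLowerCase() === c.text.toLowerCase() ? 60 : 0;
  const rolePoints = fp.role === c.role ? 20 : 0;
  const positionPoints = fp.position === c.position ? 20 : 0;
  return textPoints + rolePoints + positionPoints;
}

function heal(stepIntent: string, fp: Fingerprint, page: Candidate[], threshold: number): HealResult {
  let best: Candidate | undefined;
  let bestScore = -1;
  for (const c of page) {
    const s = score(fp, c);
    if (s > bestScore) {
      best = c;
      bestScore = s;
    }
  }
  if (best !== undefined && bestScore >= threshold) {
    // Confident match: the run continues and the test file is untouched.
    return { kind: "resolved", selector: best.selector, confidence: bestScore };
  }
  // Not confident: emit a diff for a human to review; nothing is written silently.
  const suggestion = best !== undefined ? 'Click "' + best.text + '"' : stepIntent;
  const proposedPatch = "- intent: " + stepIntent + "\n+ intent: " + suggestion;
  return { kind: "needs-review", proposedPatch: proposedPatch, confidence: Math.max(bestScore, 0) };
}

const fingerprint: Fingerprint = { role: "button", text: "Add to cart", position: 3 };

// Case 1: the button became a link, but its text and position are unchanged.
const afterTagSwap: Candidate[] = [
  { selector: "a.nav-home", role: "link", text: "Home", position: 0 },
  { selector: "a.add-to-cart", role: "link", text: "Add to cart", position: 3 },
];
const healed = heal("Add the first product to the cart", fingerprint, afterTagSwap, 70);

// Case 2: the label and position changed; nothing clears the threshold.
const afterRedesign: Candidate[] = [
  { selector: "a.nav-home", role: "link", text: "Home", position: 0 },
  { selector: "button.buy", role: "button", text: "Buy now", position: 5 },
];
const unsure = heal("Add the first product to the cart", fingerprint, afterRedesign, 70);
```

In the first case the step resolves with 80 of 100 points and the run carries on. In the second the best candidate scores 20, so the sketch returns a proposed patch instead of guessing, which keeps the audit trail in the PR.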

This is the basic that didn't exist in 2020 at all, because the trigger condition — autonomous AI coding agents writing production code — didn't exist either. In 2026, agent-native autonomous QA is what closes the loop.

Here's the failure mode it solves: a coding agent generates a feature, the test suite passes (because the agent never touched the tests), the PR lands, and three days later a customer reports the new flow is broken. The agent never verified its own output in a real browser. It only ran whatever tests already existed.

The 2026 basic: the coding agent calls testing as a tool, in the same session, before the PR is opened. It generates an intent-based test for the feature it just built, runs it in a real browser, sees the actual rendered behavior, and only then signals the PR is ready. See the testing layer for AI coding agents for the pattern.

This requires two things from the testing tool:

The shorthand: if your testing tool doesn't expose itself to the agent, the agent ships code your tool never saw.
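The loop described above (write the test, run it in a browser, fix, and only then mark the PR ready) can be sketched in a few lines. This is an assumption-heavy illustration: `TestingTool`, `runInBrowser`, and `buildAndVerify` are made-up names, not the Shiplight SDK or MCP surface, and the browser run is faked so the example stays self-contained.

```typescript
// Hypothetical tool interface an agent could call; not a real SDK surface.
interface TestResult { passed: boolean; failedStep?: string; }
interface TestingTool { runInBrowser(testYaml: string): TestResult; }

interface AgentOutcome { prReady: boolean; attempts: number; lastFailure?: string; }

// Write one intent test for the new feature, then run and fix until it passes
// or the attempt budget runs out. The PR is only marked ready on a pass.
function buildAndVerify(
  writeTest: () => string,
  fixCode: (failedStep: string) => void,
  tool: TestingTool,
  maxAttempts: number
): AgentOutcome {
  const testYaml = writeTest();
  let lastFailure: string | undefined;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const result = tool.runInBrowser(testYaml);
    if (result.passed) {
      return { prReady: true, attempts: attempt };
    }
    lastFailure = result.failedStep !== undefined ? result.failedStep : "unknown step";
    fixCode(lastFailure);
  }
  return { prReady: false, attempts: maxAttempts, lastFailure: lastFailure };
}

// Fake tool for the example: the flow is broken until the agent "fixes" it.
let bugFixed = false;
const fakeTool: TestingTool = {
  runInBrowser: function (_testYaml: string): TestResult {
    return bugFixed ? { passed: true } : { passed: false, failedStep: "Proceed to checkout" };
  },
};

const outcome = buildAndVerify(
  () => "- intent: Proceed to checkout",
  () => { bugFixed = true; },
  fakeTool,
  3
);
```

Here the first run fails, the agent applies a fix, and the second run passes, so `outcome` reports the PR as ready after two attempts. If the budget ran out instead, `prReady` would stay false and the agent would not open the PR.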

The 2020 norm was a nightly full-suite run plus a smaller smoke check on merge. The 2026 norm is every pull request runs the relevant tests in a real browser before review, and merges are blocked on failure.

Why the shift: with AI-generated code making up the majority of PRs on many teams, the latency of "find out tomorrow that the build broke" is wildly out of step with the latency of "the agent wrote and shipped this in 4 minutes." Quality has to gate at the same speed code is being produced.

What a 2026 CI gate actually checks:

This is what a practical quality gate for AI pull requests describes in detail. The output is binary: the PR is mergeable or it isn't, on signals the reviewer can trust.
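As a minimal sketch of a binary gate, assume each user flow maps to some source path prefixes and some test files. The `Flow` shape, the paths, and the `selectTests` and `gate` helpers are hypothetical, and the test runner is passed in as a plain function so the example stays self-contained.

```typescript
// Hypothetical mapping from a user flow to source path prefixes and test files.
interface Flow { name: string; sourcePrefixes: string[]; tests: string[]; }
interface GateDecision { mergeable: boolean; failed: string[]; }

// Select the tests for every flow whose source paths the PR touched.
function selectTests(changedFiles: string[], flows: Flow[]): string[] {
  const selected: string[] = [];
  for (const flow of flows) {
    const touched = changedFiles.some((file) =>
      flow.sourcePrefixes.some((prefix) => file.indexOf(prefix) === 0)
    );
    if (!touched) {
      continue;
    }
    for (const test of flow.tests) {
      if (selected.indexOf(test) === -1) {
        selected.push(test);
      }
    }
  }
  return selected;
}

// Run the selected tests; the PR is mergeable only if none of them fail.
// Note: with no selected tests this sketch returns mergeable = true.
function gate(selected: string[], runTest: (testFile: string) => boolean): GateDecision {
  const failed = selected.filter((test) => !runTest(test));
  return { mergeable: failed.length === 0, failed: failed };
}

const flows: Flow[] = [
  { name: "checkout", sourcePrefixes: ["src/checkout/"], tests: ["tests/checkout.yaml"] },
  { name: "login", sourcePrefixes: ["src/auth/"], tests: ["tests/login.yaml"] },
];

const selected = selectTests(["src/checkout/cart.ts", "README.md"], flows);
const blocked = gate(selected, (test) => test !== "tests/checkout.yaml");
const allowed = gate(selected, () => true);
```

Whether an empty selection should pass is a policy choice; a stricter gate could fall back to a smoke suite when no flow matches the changed files.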

Shiplight feature. Shiplight Cloud runners hook into GitHub Actions, GitLab CI, and CircleCI. Test runs produce replay video, DOM snapshots, and a structured failure diff per step — not a wall of stack trace. See E2E testing in GitHub Actions: setup guide for the wiring.
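To show what "structured, per step" buys a reviewer compared with a stack trace, here is a small hypothetical record and formatter. The `StepFailure` fields and the file paths are invented for illustration and do not describe Shiplight's actual output format.

```typescript
// Hypothetical record for one failed step; field names are illustrative only.
interface StepFailure {
  step: string;        // the intent that failed
  expected: string;
  actual: string;
  replayVideo: string; // path or URL of the recorded run
  domSnapshot: string; // path of the captured DOM
}

// Render a failure as a short, reviewer-friendly diff.
function formatFailure(f: StepFailure): string {
  return [
    "step: " + f.step,
    "- expected: " + f.expected,
    "+ actual:   " + f.actual,
    "replay: " + f.replayVideo,
    "dom: " + f.domSnapshot,
  ].join("\n");
}

const report = formatFailure({
  step: "VERIFY: order confirmation page shows order number",
  expected: "heading containing an order number",
  actual: "heading reads \"Something went wrong\"",
  replayVideo: "artifacts/run-1/replay.webm",
  domSnapshot: "artifacts/run-1/step-3.html",
});
```

The point of the shape is that every failure names the step, the mismatch, and where the evidence lives, so a reviewer can act without re-running anything.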

The quiet basic — the one people only realize they were missing when they try to migrate off a vendor — is where the test definitions actually live. In the 2020 SaaS-testing era, tests lived in the vendor's UI: drag-and-drop builders, proprietary script formats, screenshots in their cloud. Leaving the vendor meant rewriting from scratch.

In 2026, the basic is: tests live in your repo, as plain text, reviewed in PR, owned by the same engineers who own the feature. Properties this enables:

- Test changes show up in `git log`, with the same author attribution and revert path as any other file.

This is why YAML-based testing is the format we picked. It's text. It diffs cleanly. It travels.

Adopting all five basics in one quarter is a rewrite. You don't need a rewrite. Here's the staged path used by teams in the 30-day agentic E2E playbook:

Week 1 — make new tests intent-based. Stop writing new Playwright. For every new feature, the engineer (or the coding agent) writes the test in YAML. Existing Playwright keeps running unchanged.

Week 2 — enable self-healing on the YAML suite. Run intent tests through Shiplight Plugin with self-healing on. Approve patches in PR. Measure the maintenance-time delta vs the legacy Playwright suite — typical teams see 30–50% reduction inside two weeks.

Week 3 — wire CI gates. Add Shiplight to your pull-request pipeline. Block merge on failure for the touched flows. Keep the nightly Playwright run as a safety net during transition.

Week 4 — give the coding agent access. Install the Shiplight MCP server and let your AI coding agent generate and run tests for the features it builds. The agent now closes its own loop. See agent-first testing.

Month 2+ — port the legacy suite opportunistically. Whenever a Playwright test breaks and needs maintenance anyway, rewrite it in YAML instead of patching the selector. The legacy suite shrinks naturally; no big migration project needed.

A few perennials that didn't make the "basics" cut, with the 2026 takes:

The 2026 basics are: (1) intent-based authoring instead of selector-bound code, (2) self-healing as the default, not a premium add-on, (3) agent-native verification so AI coding agents test inside their build session, (4) PR-time CI gates that block merges on real-browser failures, and (5) test definitions stored as plain-text artifacts in the repo, not in a vendor UI.

In 2020, testing meant Playwright/Cypress/Selenium code bound to CSS selectors, run nightly, maintained by a QA team that spent 40–60% of its time on selector upkeep. In 2026, tests are natural-language YAML committed in git, self-heal across refactors, run on every pull request, and are authored by the same engineer or AI coding agent that shipped the feature. Maintenance cost drops by an order of magnitude.

No. The recommended path is incremental: start writing new tests in the new format, enable self-healing on the new suite first, and let the legacy Playwright suite shrink opportunistically (rewrite a test when it breaks, instead of patching the selector). Most teams reach majority-intent-based coverage in 8–12 weeks without a dedicated migration project.

It means the AI coding agent — Claude Code, Cursor, Codex, or a custom orchestration — calls testing as a tool inside the same session it wrote the code. It generates a test for what it just built, runs that test in a real browser, sees the rendered behavior, and only signals the PR is ready if the test passes. Without this, the agent ships code your test suite never verified.

Intent-based authoring → YAML Test Format. Self-healing → Shiplight Plugin with built-in AI Fixer. Agent-native verification → Shiplight AI SDK and MCP Server. PR-time CI gates → Shiplight Cloud runners. Test ownership in the repo → YAML files committed alongside source.

The good implementations don't silently rewrite. They emit a proposed patch as a reviewable diff in your PR. A human approves the change the same way they'd review any code change. The audit trail stays in git log. Tools that auto-edit tests without review are the ones that get a bad reputation — pick the ones that surface every change to a human reviewer.

---

The five basics above aren't aspirational. They're the floor your stack should be at before mid-2026. If you're starting from a 2020-era setup, book a 30-minute walkthrough — we'll map your current suite to each basic and show you what an incremental upgrade looks like for your codebase.